Deep Customization: Building a Private AI Development Pipeline with OpenClaw + VPSMAC

In 2026, generic AI development tools are no longer enough for developers and enterprises who need to protect their core intellectual property. How do you build a development environment that leverages LLM intelligence while maintaining hardware-level data isolation? This article explores building a 24/7 autonomous, private AI development pipeline using OpenClaw's automation and VPSMAC M4 bare-metal clusters.

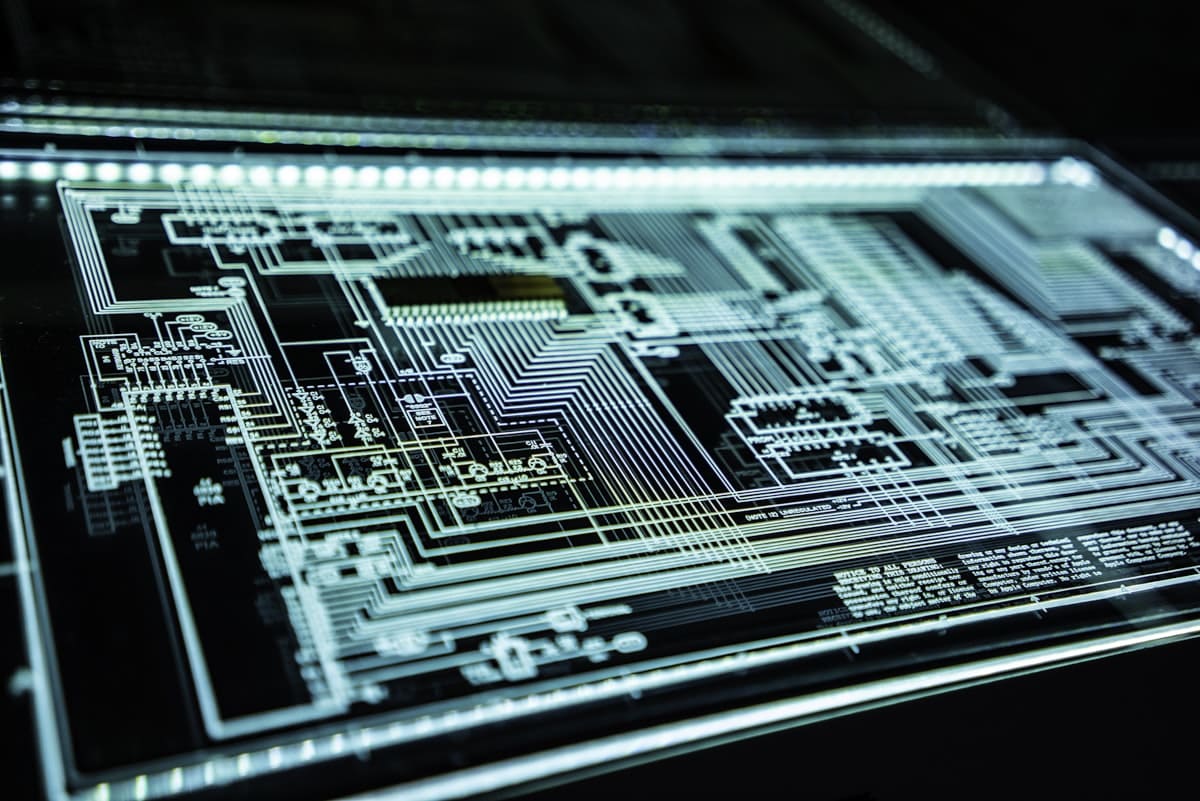

The Era of Code Sovereignty

Every time a developer pastes core logic into a public AI platform, a bit of code sovereignty is lost. In 2026, leading teams are shifting back to "privatization." By combining independent bare-metal Mac nodes from VPSMAC with OpenClaw's GUI-level automation, you can keep your whole pipeline, from logic generation to hardware testing, inside a private network.

Phase 1: Designing the Pipeline Architecture

A mature private AI pipeline consists of three core layers: Inference, Execution, and Verification. Within a VPSMAC cluster, each layer can run on nodes configured for its workload, which balances load across the cluster.

Private Inference Nodes

Deploy DeepSeek-Coder or Llama-3 via Ollama on an M4 instance with 64GB RAM. All code prompts remain within your local network, preventing public exposure.
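A minimal sketch of how a client inside the private network might query such a node, assuming Ollama is listening on its default port 11434 and a model tagged `deepseek-coder` has already been pulled. The address `10.0.0.12` and the helper names are hypothetical.

```python
import json
import urllib.request

# Hypothetical private address of the inference node; 11434 is Ollama's default port.
OLLAMA_URL = "http://10.0.0.12:11434/api/generate"


def build_generate_request(prompt, model="deepseek-coder", url=OLLAMA_URL):
    """Build a non-streaming request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="deepseek-coder", url=OLLAMA_URL, timeout=120):
    """Send the prompt to the private node and return the generated text."""
    request = build_generate_request(prompt, model, url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["response"]
```

Because the URL points at a private address, the prompt and the completion travel only between machines on your own network.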

OpenClaw Co-Execution (GUI Automation)

Once granted macOS GUI permissions, OpenClaw automates Xcode archiving, certificate configuration, and complex plist modifications.
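As an illustration of the plist step, the sketch below uses Python's standard `plistlib` to update keys in an `Info.plist`. It shows only the file edit an agent might script, not OpenClaw's own interface; the function name and the example path are assumptions.

```python
import plistlib
from pathlib import Path


def update_plist(path, updates):
    """Merge `updates` into the plist at `path` and write it back in place."""
    plist_path = Path(path)
    with plist_path.open("rb") as handle:
        data = plistlib.load(handle)
    data.update(updates)
    with plist_path.open("wb") as handle:
        plistlib.dump(data, handle)
    return data
```

A call such as `update_plist("MyPrivateApp/Info.plist", {"CFBundleShortVersionString": "1.2.0"})` would bump the marketing version before an archive.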

Bare-Metal Testing

Leveraging VPSMAC's real hardware environment, AI agents automatically run simulators or operate physical interfaces for regression testing, feeding screenshots back to an audit node.
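The simulator side of this loop can be driven with Apple's `xcrun simctl` tool. The sketch below only assembles and runs the commands for booting a simulator and capturing a screenshot. The helper names are hypothetical, and how screenshots reach the audit node is left out.

```python
import subprocess


def simulator_commands(device, screenshot_path):
    """Return the simctl commands to boot a simulator and capture its screen."""
    return [
        ["xcrun", "simctl", "boot", device],
        ["xcrun", "simctl", "io", device, "screenshot", screenshot_path],
    ]


def run_commands(commands, runner=subprocess.run):
    """Run each command in order; check=True raises on the first failure."""
    for command in commands:
        runner(command, check=True)
```

Passing the runner as a parameter keeps the command list easy to inspect or log before anything touches the simulator.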

Phase 2: Deep Integration with Local IDEs

To be truly useful, the pipeline must sense your local changes. We recommend mounting your local codebase on the VPSMAC instance over an encrypted tunnel.
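Mounting is one option. A simpler stand-in, sketched here, is to mirror the source tree to the node with `rsync` over SSH, which also keeps the transfer encrypted. The function name and the remote host in the usage note are hypothetical.

```python
def rsync_over_ssh(local_dir, remote, remote_dir, delete=True):
    """Build an rsync command that mirrors `local_dir` to the remote over SSH."""
    command = ["rsync", "-az", "-e", "ssh"]
    if delete:
        # Remove remote files that no longer exist locally.
        command.append("--delete")
    # A trailing slash copies the directory's contents, not the directory itself.
    command += [local_dir.rstrip("/") + "/", f"{remote}:{remote_dir}"]
    return command
```

For example, `rsync_over_ssh("./src", "dev@vpsmac-node", "~/project/src")` returns an argument list that can be handed to `subprocess.run`.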

Configuring an Automated Compilation Sentinel

We can write an OpenClaw task script that listens for file changes and automatically triggers high-speed compilation on the M4 chip.

    # openclaw_watch.py
    import openclaw
    from openclaw.events import FileWatcher


    def on_code_change(event):
        """Triggered when local code is pushed to VPSMAC."""
        agent = openclaw.Agent(name="Compiler")
        # Archive the app with xcodebuild on the M4 node.
        agent.run_shell("xcodebuild archive -scheme MyPrivateApp")
        agent.notify_dev("Build complete. Starting AI static scan...")


    # Watch the synced source tree and rebuild on every modification.
    watcher = FileWatcher(path="~/project/src")
    watcher.on_modified(on_code_change)
    watcher.start()
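For readers who want to see the mechanics behind such a watcher, here is a dependency-free sketch of the same idea: poll the source tree, compare modification times, and fire a callback when something differs. The function names and the one-second interval are illustrative assumptions, not part of OpenClaw.

```python
import os
import time


def snapshot(root):
    """Map every file under `root` to its last-modified time."""
    mtimes = {}
    for folder, _dirs, files in os.walk(root):
        for name in files:
            path = os.path.join(folder, name)
            try:
                mtimes[path] = os.stat(path).st_mtime_ns
            except FileNotFoundError:
                pass  # File vanished between listing and stat; skip it.
    return mtimes


def changed_paths(before, after):
    """Return paths that were added, removed, or modified between snapshots."""
    return sorted(
        path
        for path in before.keys() | after.keys()
        if before.get(path) != after.get(path)
    )


def watch(root, on_change, interval=1.0):
    """Poll `root` forever, calling `on_change(paths)` whenever files differ."""
    previous = snapshot(root)
    while True:
        time.sleep(interval)
        current = snapshot(root)
        paths = changed_paths(previous, current)
        if paths:
            on_change(paths)
        previous = current
```

Polling is less efficient than native file-system events, but it behaves the same on a mounted or synced directory, where change notifications are not always delivered.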

Phase 3: Scaling with M4 Clusters

The power of the pipeline lies in its horizontal scalability. When you need to test 10 different iOS versions simultaneously, you can launch 10 M4 Pro instances with one click in the VPSMAC console. OpenClaw's cluster management allows a master node to distribute test instructions to all workers and aggregate GUI error reports.
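The master/worker pattern described here can be sketched with a thread pool: one job per iOS version, with results gathered as they finish. `run_on_worker` is a hypothetical stand-in for whatever actually dispatches a test run to a node; nothing here reflects OpenClaw's cluster management interface.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def run_matrix(ios_versions, run_on_worker, max_workers=10):
    """Run one test job per iOS version in parallel and collect the outcomes.

    `run_on_worker(version)` should return a list of error strings
    (empty when the run passes). Exceptions are recorded as errors.
    """
    report = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(run_on_worker, v): v for v in ios_versions}
        for future in as_completed(futures):
            version = futures[future]
            try:
                report[version] = future.result()
            except Exception as exc:
                # A crashed worker should not sink the whole run.
                report[version] = [f"worker failed: {exc}"]
    return report
```

The returned dictionary maps each iOS version to its error list, so the master node can tell at a glance which versions passed cleanly.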

Comparison: Traditional CI/CD vs. VPSMAC AI Pipeline

- Traditional CI/CD: Limited to script execution; cannot handle complex UI interactions (e.g., IAP testing, 2FA prompts).

- VPSMAC + OpenClaw: AI agents possess visual recognition, allowing them to interact with pop-ups like a human and handle edge-case exceptions.

Phase 4: Physical Zero-Trust Security

In a private pipeline, VPSMAC's physical isolation is critical. Because there is no hypervisor layer shared with other tenants, intermediate caches, dSYMs, and keys stay on hardware dedicated to you. With OpenClaw's Memory-Only Secrets mode configured, secrets are held only in physical memory, so all traces are wiped as soon as the node powers down.
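To make the in-memory idea concrete, the sketch below keeps a secret in a mutable buffer and zeroes it when the work is done. This is a generic illustration, not OpenClaw's Memory-Only Secrets mode, and it has limits: the `bytes` value passed in, and any copies the interpreter makes, are not wiped.

```python
from contextlib import contextmanager


@contextmanager
def in_memory_secret(value: bytes):
    """Yield a mutable copy of `value` and zero it out when the block exits."""
    buffer = bytearray(value)
    try:
        yield buffer
    finally:
        # Overwrite in place so this buffer no longer holds the secret.
        for index in range(len(buffer)):
            buffer[index] = 0
```

Nothing in this pattern writes the secret to disk, which is the property a memory-only approach is meant to preserve.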

Conclusion: Redefining Indie Productivity

This pipeline is designed to free developers from the tedium of "compile, wait, click-test, resubmit." With VPSMAC M4 clusters and OpenClaw, you have a guardian and executor for your codebase. This private solution isn't just a technical upgrade; it's the ultimate respect for developer creativity.

Build Yours Now: Log in to the VPSMAC console, select your M4 cluster nodes, and start your automated private development era.