2026 OpenClaw Gateway Troubleshooting: The status → logs → doctor Ladder (Port 18789 & Channel Side)

Power users rarely fail at install—they fail when the gateway looks healthy, the CLI responds, but channels stay silent, tools do not fire, or the control UI flakes. This guide enforces order: prove the control plane with openclaw status, collect evidence with openclaw logs in a bounded window, run openclaw doctor (optionally --fix) only after backup, then patch surgically. It isolates port 18789 conflicts, pairing/auth expiry, and connected-but-no-reply channel issues from model-side 429s, and closes with Mac cloud 24/7 habits—SSH tunnels to reduce exposure instead of publishing the port raw on the internet.

Key points

1. Why ladder order matters

A single “runtime: running” line does not prove 18789 is listening correctly, that pairing is still valid, or that IM webhooks/long-polls actually deliver. Jumping to model tweaks before status, or running doctor --fix before reading JSONL in order, frequently mislabels channel permission bugs as “lazy models” and mislabels remote URL drift as provider rate limits. Minimum discipline for 2026 ops: capture status plus a time-bounded log slice before any restart so you can tell transient noise from config drift.

Layering matters because the same timeout message can come from different planes: gateway RTT to an upstream, an IM callback blocked by a corporate firewall, or a stalled provider socket. Tag incidents in your ticket as control / data / channel so postmortems line up with timestamps instead of mixing unrelated failures. When executives ask for a one-line root cause, you can still answer—but your internal notes should preserve which plane failed first.
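The "capture before restart" discipline can be scripted. The following is a minimal sketch, not an official tool: it assumes `openclaw` is on PATH and uses only the bare subcommands named in this guide, while the `capture` helper and the output directory are hypothetical names chosen for illustration.

```python
import subprocess
import time
from pathlib import Path


def capture(argv, out_dir, label, timeout=60):
    """Run a command and save its combined stdout/stderr to a timestamped file."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    stamp = time.strftime("%Y%m%dT%H%M%SZ", time.gmtime())
    try:
        result = subprocess.run(
            argv,
            stdout=subprocess.PIPE,
            stderr=subprocess.STDOUT,
            text=True,
            timeout=timeout,
        )
        output, code = result.stdout, result.returncode
    except subprocess.TimeoutExpired as exc:
        # A streaming command may never exit; keep whatever was read so far.
        output = exc.stdout or ""
        if isinstance(output, bytes):
            output = output.decode(errors="replace")
        code = "timeout"
    path = out_dir / f"{stamp}-{label}.txt"
    path.write_text(f"$ {' '.join(argv)}\nexit={code}\n{output}")
    return path
```

Before a restart, something like `capture(["openclaw", "status"], "incident-evidence", "status")` followed by the same call for `["openclaw", "logs"]` leaves two timestamped files to attach to the ticket. How to bound the log window is version-dependent and deliberately not shown here.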

2. Pain layers: control, data, channel

- Control plane: In gateway.mode=remote, a local process may idle while you think it is serving; verify bind addresses, listeners, and probes, not just runtime.

- Data plane: Without a fixed log walk order you drown in INFO heartbeats; filter WARN/ERROR first, then expand by request id. Prefer structured JSONL when available.

- Channel plane: “Connected but silent” often maps to mention rules, group scopes, expired pairing, or bot permissions, signals that may not surface as ERROR in doctor alone.

The three-layer lens stops reflexive temperature tuning when the webhook never reached your process. Teams routinely burn hours “making the model more responsive” while a Slack or Discord app manifest is missing a single OAuth scope—evidence that belongs in the channel plane, not the model plane.
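The data-plane walk order (WARN/ERROR first, then expand by request id) might look like the sketch below when the logs are JSONL. The field names `level` and `request_id` are assumptions for illustration; check the keys your release actually emits and pass them in.

```python
import json
from collections import defaultdict

ALERT_LEVELS = {"WARN", "WARNING", "ERROR"}


def triage(lines, level_key="level", request_key="request_id"):
    """Return (alerts, context): alert records, plus all records sharing their request ids."""
    records = []
    for raw in lines:
        raw = raw.strip()
        if not raw:
            continue
        try:
            rec = json.loads(raw)
        except json.JSONDecodeError:
            continue  # tolerate stray non-JSON lines in the slice
        if isinstance(rec, dict):
            records.append(rec)

    # Pass 1: keep only WARN/ERROR records.
    alerts = [r for r in records if str(r.get(level_key, "")).upper() in ALERT_LEVELS]

    # Pass 2: pull in every record (including INFO) that shares a request id with an alert.
    wanted = {r[request_key] for r in alerts if r.get(request_key) is not None}
    context = defaultdict(list)
    for r in records:
        rid = r.get(request_key)
        if rid is not None and rid in wanted:
            context[rid].append(r)
    return alerts, dict(context)
```

Feeding it an open log file (`triage(open(path))`) returns the short alert list to read first and, per request id, the surrounding lines in their original order.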

3. Symptom routing table

| Symptom | Suspect layer | Do not do first |
|---|---|---|
| Local curl to 18789 fails | Control | Rename the model |
| doctor reports invalid JSON5 keys | Config | Delete entire state dir |
| DM works, group chat silent | Channel | Tweak temperature |
| Provider 429 streak | Model/quota | Restart loop |
| Pairing works then dies in minutes | Channel + clock | Reinstall npm only |

During escalations, use the table as a guardrail: if curl to loopback fails, do not rotate channel tokens until the listener is proven. If DMs work but group chats do not, stay in the channel plane—compare mention policies, bot membership, and workspace IDs side by side.
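If the table lives in a runbook repository, it can also be kept as data so on-call tooling can look it up. This is only an illustrative sketch; the `Route` type and `route` function are hypothetical names, and the entries simply restate the rows above.

```python
from typing import NamedTuple, Optional


class Route(NamedTuple):
    layer: str          # plane to investigate first
    avoid_first: str    # tempting action to postpone


ROUTING = {
    "local curl to 18789 fails": Route("control", "rename the model"),
    "doctor reports invalid json5 keys": Route("config", "delete entire state dir"),
    "dm works, group chat silent": Route("channel", "tweak temperature"),
    "provider 429 streak": Route("model/quota", "restart loop"),
    "pairing works then dies in minutes": Route("channel + clock", "reinstall npm only"),
}


def route(symptom: str) -> Optional[Route]:
    """Look up a symptom, ignoring case and surrounding whitespace; None if unknown."""
    return ROUTING.get(symptom.strip().lower())
```

An unknown symptom returns `None` on purpose: the table is a guardrail for known patterns, not a classifier.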

4. Five+ steps: status → logs → doctor → targeted fixes

Use the same order on Linux, macOS, or Mac cloud. For Docker, run commands in the same namespace to avoid split-brain conclusions between host and container. If you must compare host vs container, run openclaw doctor in both and diff only after confirming the same config files are mounted read-write where expected.

- Baseline (openclaw status): Record runtime, gateway mode, and bind address; if remote, record the upstream URL and its health, not just “running.”

- Evidence (openclaw logs): Capture 10 minutes before and after upgrades; filter WARN/ERROR, then correlate by request id; fix TLS/DNS before tokens.

- Doctor (openclaw doctor): Read suggestions without --fix first; back up ~/.openclaw or mounted configs before auto-fix.

- Port 18789: Identify the owning process; prefer loopback bind plus SSH tunnel or reverse proxy over exposing raw ports.

- Channels: Run channel status/probe commands per your version; re-pair if expired; validate bot scopes and mention rules for groups.

- Regression: Validate with a minimal DM probe, then tools, then full traffic.
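Two of the steps above lend themselves to small helpers: archiving the state directory before any auto-fix, and confirming that something accepts connections on the loopback port. The sketch below is an assumption-laden illustration, not part of OpenClaw; `backup_dir` and `port_accepts_connections` are hypothetical names, and 18789 should be confirmed against your release notes.

```python
import shutil
import socket
import time
from pathlib import Path


def backup_dir(src, dest_dir):
    """Archive src into dest_dir as a timestamped .tar.gz; return the archive path."""
    src = Path(src).expanduser()
    if not src.is_dir():
        raise FileNotFoundError(f"nothing to back up at {src}")
    dest_dir = Path(dest_dir).expanduser().resolve()
    dest_dir.mkdir(parents=True, exist_ok=True)
    stamp = time.strftime("%Y%m%dT%H%M%SZ", time.gmtime())
    base = dest_dir / f"{src.name.lstrip('.')}-{stamp}"
    archive = shutil.make_archive(
        str(base), "gztar", root_dir=str(src.parent), base_dir=src.name
    )
    return Path(archive)


def port_accepts_connections(host="127.0.0.1", port=18789, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

A call such as `backup_dir("~/.openclaw", "~/openclaw-backups")` (the destination is an arbitrary example, kept outside the source directory) gives you a rollback point before running the fix. A successful probe only shows that some process is listening; it does not prove that the process is the gateway, so still identify the owner of the port.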

When incidents repeat weekly, archive “known good” status output and a redacted log slice in your runbook. Future on-call engineers can diff against those baselines instead of guessing whether a new warning is benign noise or a regression introduced by a dependency bump.
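Diffing against an archived baseline needs nothing beyond the standard library. The sketch below assumes both snapshots are plain text already saved by your runbook process; `diff_against_baseline` is a hypothetical helper name.

```python
import difflib


def diff_against_baseline(baseline_text, current_text):
    """Return unified-diff lines between a known-good snapshot and the current one."""
    return list(
        difflib.unified_diff(
            baseline_text.splitlines(),
            current_text.splitlines(),
            fromfile="known-good",
            tofile="current",
            lineterm="",
        )
    )
```

An empty list means the two snapshots match line for line. Redact tokens and strip timestamps before archiving, otherwise every comparison will show noise.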

5. Hard facts: port, pairing, log keys

- 18789: common default local gateway port—confirm against your release notes; conflicts often mean duplicate instances.

- Pairing TTL: codes expire; renew promptly on the IM side to avoid half-paired states.

- Correlation keys: keep request id, channel id, and provider error codes for cross-check with token billing.

- Upgrade/rollback: if doctor flags migration items post-upgrade, follow release notes before downgrading; keep log excerpts for postmortems.

- Audit: document JSONL paths and rotation—often seven-day minimum for triage (adjust for policy).

- Docker: if doctor differs host vs container, check volume mounts and uid before touching channel secrets.

- Clock skew: VM or container clocks that drift break TLS handshakes and OAuth-style tokens; verify time sync on host and inside the container before blaming OpenClaw binaries.

- Retry storms: when a channel is flaky, unbounded retries can flood logs and hide the first actionable line; after recovery, consider a controlled restart during a change window and compare log line counts before/after.

- Org-wide concurrency: multiple agents hitting the same provider from one egress IP can trip shared rate limits; correlate provider 429s with other services before raising gateway concurrency.
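For probes or scripts you write around the gateway, a bounded retry with capped backoff avoids contributing to the retry storms described above. This is a generic sketch under stated assumptions: it says nothing about how OpenClaw itself retries, and `retry_bounded` and its parameters are illustrative names.

```python
import random
import time


def retry_bounded(operation, attempts=5, base_delay=0.5, max_delay=30.0, sleep=time.sleep):
    """Call operation() at most `attempts` times with capped exponential backoff and jitter.

    The last failure is re-raised so the first actionable error is not swallowed.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return operation()
        except Exception:
            if attempt == attempts:
                raise
            # Delay doubles each attempt, is capped, and is jittered to avoid lockstep retries.
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(delay * random.uniform(0.5, 1.0))
```

The `sleep` parameter exists so tests can inject a fake and run instantly. In production the default `time.sleep` applies.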

6. Mac cloud, tunnels, and limits of cheap hosts

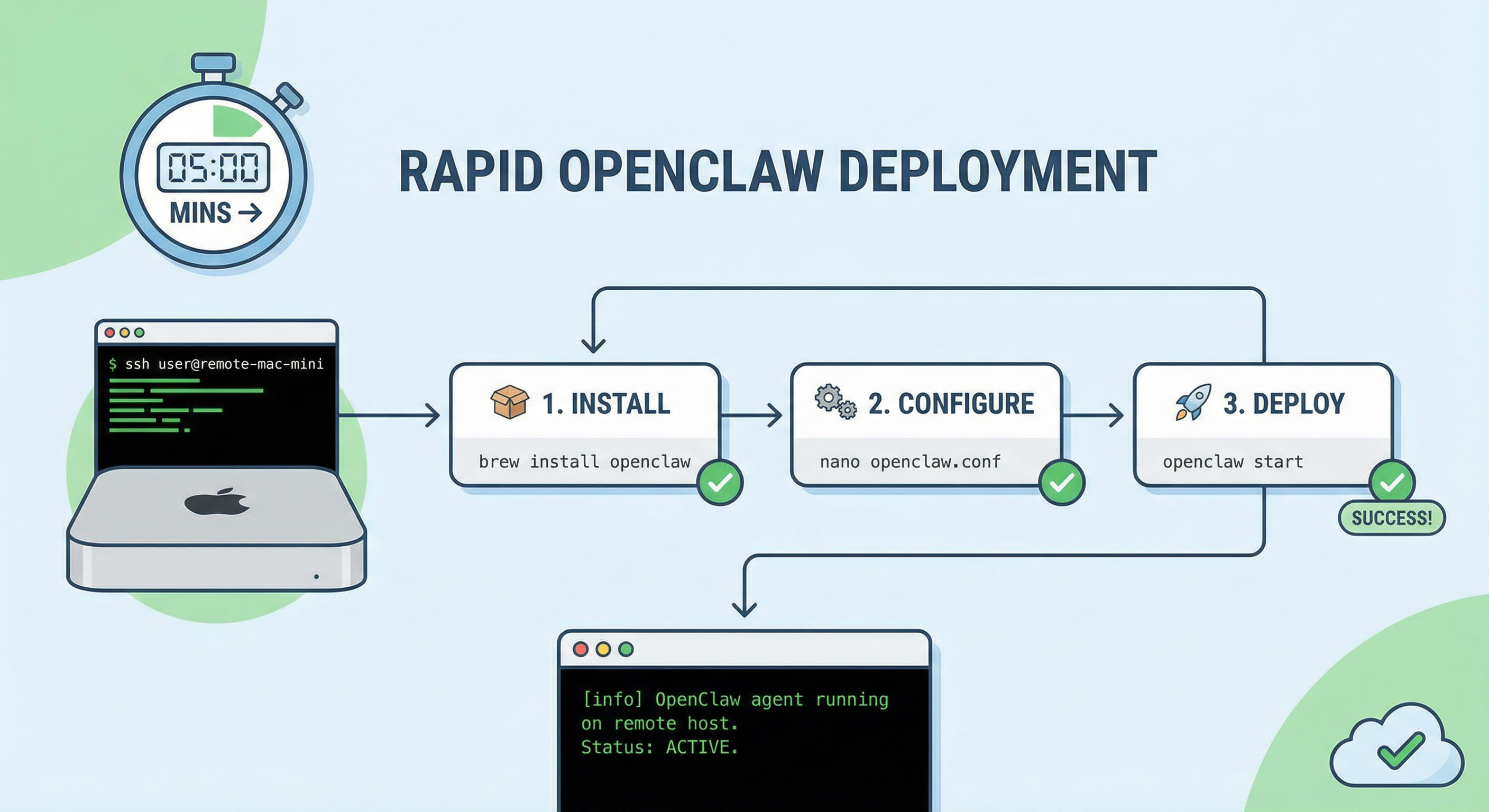

Running on a laptop versus a 24/7 Mac cloud changes supervision, log persistence, and exposure. Browser-only access without a tunnel breaks across reboots; publishing 18789 publicly invites scanners and misconfig risk. SSH tunnels or zero-trust overlays shrink the blast radius but still need key rotation and reconnect logic. Windows or budget Linux VPS may work for experiments, yet long-term daemon reliability, toolchain parity, and Apple-adjacent automation suffer. When you need native macOS, launchd-friendly supervision, and the same triage ladder without extra abstraction, renting a dedicated VPSMAC Mac cloud node is usually more predictable than stacking workarounds on non-Mac hardware. For first-time wiring, continue with the on-site five-minute OpenClaw deploy article to move from “runs once” to “survives on-call.”

That contrast is deliberate: Docker on Linux can host OpenClaw, but you pay in volume uid mapping, dual config truth, and extra moving parts during incidents. A Mac cloud node aligns with how most OpenClaw operators already think about paths, keychains, and GUI-less automation—fewer translation layers between documentation and production behavior.
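The loopback-plus-tunnel habit from this section can be sketched as a small helper that builds an OpenSSH local-forward command. The options used (`-N`, `-L`, `ExitOnForwardFailure`, `ServerAliveInterval`) are standard OpenSSH. The function name, the example host, and the assumption that the gateway listens on 127.0.0.1:18789 on the remote node are illustrative.

```python
def tunnel_argv(remote, local_port=18789, remote_port=18789, keepalive_seconds=30):
    """Build an ssh argv that forwards a local loopback port to the remote loopback port."""
    forward = f"127.0.0.1:{local_port}:127.0.0.1:{remote_port}"
    return [
        "ssh",
        "-N",                                  # no remote command, forwarding only
        "-o", "ExitOnForwardFailure=yes",      # fail fast if the local port is already taken
        "-o", f"ServerAliveInterval={keepalive_seconds}",  # detect dead links
        "-L", forward,
        remote,
    ]
```

Passing the result to `subprocess.run(tunnel_argv("ops@mac-node.example"))` keeps the tunnel in the foreground. Reconnect logic and key rotation, both called out above, are left to your supervisor of choice.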

Finally, treat provider dashboards and IM vendor consoles as part of the same evidence chain: screenshot or export rate-limit graphs when disputes arise about whether the gateway or the upstream model was slow. Pair those exports with request ids from your JSONL so finance, security, and engineering share one timeline instead of three conflicting stories.

Keep this article beside your upgrade checklist: every time you bump OpenClaw or the gateway image, rerun the ladder in staging before touching production—even when release notes look boring, subtle defaults around bind addresses and auth refresh windows still break sleepy Sunday deploys.