2026 OpenClaw Secure Deployment Guide: Run AI Agents Safely on Mac VPS (CVE-2026-25253, ClawHub Review, Ollama 14B)

If you are moving OpenClaw Gateway plus Telegram or Discord bots to the cloud but worry about CVE-2026-25253 listener issues, ClawHub Skill expansion, and Ollama 14B fighting your gateway for RAM, this article gives you a Runbook-ready structure: pain points, a Mac VPS versus Linux-only matrix, seven launchd-centric steps, hard metrics, and an FAQ.

1. Pain breakdown: "it installs" is not the same as "safe 24/7"

- CVE-2026-25253 style bind-surface risk: vendor advisories for OpenClaw-class gateways in 2026 repeatedly pair two issues: a listener that is too promiscuous by default and an upgrade path that can be swapped if you pull from unofficial mirrors. On crowded hosts or messy port forwarding, internet-wide scanners can hit your control plane within minutes; if you still rely on repackaged scripts, broken checksum chains stretch your patch window on purpose or by accident.

- ClawHub third-party Skill supply chain: one-click installs drag implicit outbound network paths, writable directories, and `exec` affordances. Without a minimum-privilege dry run, sharing `~/.openclaw` between production and experiments means a hot reload can promote an unaudited Skill into the same context as live customer traffic.

- Ollama 14B fighting the gateway for memory: on Apple Silicon unified memory, model weights and the gateway working set share the same bandwidth pool. If you never cap batch concurrency, long-context spikes slow health checks first; operators misread the symptom as "the channel is flaky" instead of memory pressure short of a hard OOM.

- Telegram / Discord permanence and secret drift: storing a Telegram bot token in an interactive shell profile while launchd reads a different plist is a classic split-brain failure: warm sessions work, cold boots do not. Multi-user SSH adds Keychain unlock races and mismatched HTTP proxy variables for outbound tool calls.
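The Skill supply-chain risk above can be screened mechanically before anything is installed. The following is a minimal Python sketch, assuming the Skill tarball has already been unpacked offline into a local directory; the function names `scan_text` and `scan_skill_dir` and the pattern set are illustrative assumptions, not part of OpenClaw or ClawHub, and a clean report does not prove a Skill is safe.

```python
import re
from pathlib import Path

# Patterns worth a human look: shell downloads, dynamic evaluation, outbound URLs.
SUSPICIOUS = {
    "curl": re.compile(r"\bcurl\b"),
    "eval": re.compile(r"\beval\b"),
    "url": re.compile(r"https?://[^\s\"')]+"),
}


def scan_text(text: str) -> list[tuple[int, str, str]]:
    """Return (line_number, pattern_name, matched_text) for each hit in one file."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SUSPICIOUS.items():
            for match in pattern.finditer(line):
                hits.append((lineno, name, match.group(0)))
    return hits


def scan_skill_dir(root: str) -> dict[str, list[tuple[int, str, str]]]:
    """Scan every regular file under an unpacked Skill directory (hypothetical layout)."""
    report = {}
    for path in sorted(Path(root).rglob("*")):
        if path.is_file():
            hits = scan_text(path.read_text(encoding="utf-8", errors="replace"))
            if hits:
                report[str(path)] = hits
    return report
```

A reviewer would run `scan_skill_dir` on the unpacked directory and attach the report to the change ticket; every hit still needs a person to decide whether the outbound host or `eval` is legitimate.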

The goal is one Runbook that covers install, hardening, observability, and rollback: freeze the medium, converge listeners, audit Skills, budget the model side, and keep IM credentials in a single authoritative place.

2. Decision matrix: native Mac VPS versus "Linux plus containers only"

Use this table in architecture reviews when the bar is auditable and reversible, not merely "demo works on my laptop".

| Dimension | Apple Silicon Mac VPS (native + launchd) | Linux host, containers only |
|---|---|---|
| Official install and patch cadence | Tracks upstream macOS releases; bind hashes to pkg or install scripts | You rebuild kernel, runc, and desktop-ish dependencies; patch windows scatter |
| CVE mitigation (listen / upgrade) | Tighten loopback plus SSH tunnel or terminate TLS at a reverse proxy; one plist environment | Port maps and host iptables stack; longer triage chains |
| ClawHub Skills | Co-locate auditing with Keychain, logs, and sandbox directories | Volume uid mismatches create silent half-writes to config JSON |
| Ollama 14B | Schedule large working sets under UMA; tune concurrency and batch size | CPU-only inference or split VRAM; worse tail latency |
| Telegram / Discord 24/7 | launchd keeps services alive with stable log paths | systemd without mac-native tooling if you still need Apple-side utilities elsewhere |

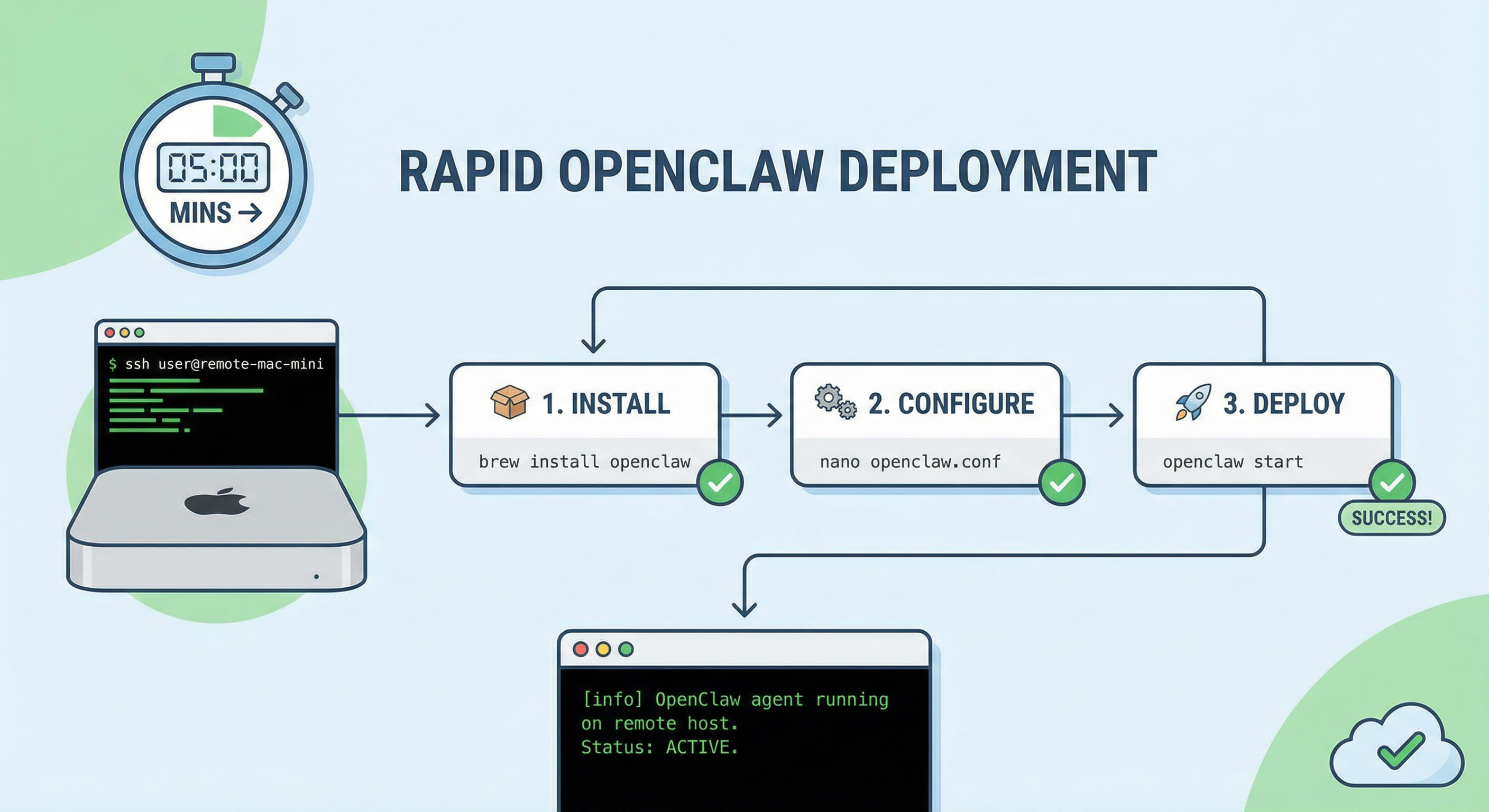

3. Seven-step runbook: frozen medium to channel smoke test

- Freeze the node baseline: record macOS minor version, Xcode CLT presence, timezone, and NTP health. Give the gateway a dedicated account so `HOME` never collides with an engineer's interactive shell.

- Pick an official medium and verify it: document whether you use the vendor-recommended `install.sh`, `npm -g`, or a Docker image with a pinned digest; reject silent `latest` drift in production.

- Apply CVE-2026-25253 mitigations: upgrade to a release that contains the vendor fix; bind the control plane to `127.0.0.1:18789` or place TLS termination in front; within 24 hours of the advisory, force-rotate gateway tokens and keep the change ticket id next to the version bump.

- Minimal ClawHub footprint: install only business-critical Skills; unpack tarballs offline and grep for `curl`, `eval`, and suspicious outbound hosts; default to `exec` gates and require two-person review for batch automation exceptions.

- Budget Ollama 14B: set soft RSS caps and concurrent session ceilings; split log files so gateway JSONL never interleaves with model stderr during incidents.

- Inject Telegram / Discord secrets once: prefer launchd `EnvironmentVariables` or a plist-referenced secret file; never commit tokens or paste them into screenshots.

- Smoke test and rollback: after `openclaw doctor` or equivalent schema checks, send a round-trip chat message and a tool-call probe; keep the last known-good global install and plist pair so you can rip out a bad Skill and downgrade the gateway inside your RTO target.
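Several of the steps above (loopback bind, single-source secrets, stable log paths, keep-alive) meet in one launchd job definition. Here is a minimal sketch using Python's standard `plistlib` to render such a job. The launchd keys are real, but the label `com.example.openclaw.gateway`, the binary path, the `gateway` argument, the variable names `OPENCLAW_BIND` and `TELEGRAM_BOT_TOKEN`, and the log paths are all placeholders assumed for illustration; check the OpenClaw documentation for the names your release actually reads.

```python
import plistlib


def build_gateway_plist(token: str) -> bytes:
    """Render a launchd job definition as XML plist bytes."""
    job = {
        "Label": "com.example.openclaw.gateway",  # hypothetical label
        # Assumed install path and subcommand; adjust to your verified medium.
        "ProgramArguments": ["/usr/local/bin/openclaw", "gateway"],
        "EnvironmentVariables": {
            "OPENCLAW_BIND": "127.0.0.1:18789",  # assumed variable name
            "TELEGRAM_BOT_TOKEN": token,  # the one authoritative copy
        },
        "RunAtLoad": True,
        "KeepAlive": True,
        # Separate files so gateway output never interleaves with model stderr.
        "StandardOutPath": "/var/log/openclaw/gateway.out.log",
        "StandardErrorPath": "/var/log/openclaw/gateway.err.log",
    }
    return plistlib.dumps(job)


if __name__ == "__main__":
    print(build_gateway_plist("REPLACE_ME").decode("utf-8"))
```

Generating the plist from code keeps the token out of shell profiles and makes the known-good plist easy to diff during rollback. The rendered file would then be loaded with `launchctl bootstrap` after removing any previous job with `launchctl bootout`.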

4. EEAT-friendly numbers you can paste into an acceptance sheet

- Default control-plane port: community triage still centers on `18789`; multiple gateway instances on one Mac VPS will fight silently; always `bootout` the old job first.

- UMA headroom for 14B: on a 24 GB M4-class rental, keep roughly eight to ten gigabytes of effective headroom for macOS plus the gateway before loading a 14B Q4 bundle; prefer lowering concurrency over living in swap.

- Logging and alert thresholds: track tool error rate and channel reconnect bursts; if Skill calls spike abnormally inside five minutes, flip a read-only mode flag while you investigate.

- Rotation cadence: rotate bot tokens and gateway API keys at least quarterly; for advisory-grade CVEs, schedule an emergency rotation within twenty-four hours and diff logs before and after.

- Disk budget per job: reserve roughly twelve to twenty gigabytes of free space per concurrent iOS-adjacent automation job when you also store model weights locally; DerivedData-style churn from side tools can still steal inode headroom on smaller VPS disks.
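The five-minute spike rule in the logging bullet above amounts to a sliding-window counter. A minimal sketch follows, assuming Skill calls can be observed as timestamps in seconds. The class name `SpikeGuard` and the default threshold of 50 calls are illustrative assumptions; the article does not specify what counts as abnormal, so tune the number against your own baseline.

```python
from collections import deque


class SpikeGuard:
    """Flag when more than max_calls Skill calls land inside window_s seconds."""

    def __init__(self, max_calls: int = 50, window_s: float = 300.0) -> None:
        self.max_calls = max_calls  # illustrative threshold, not a vendor default
        self.window_s = window_s  # five minutes
        self._stamps = deque()

    def record(self, now: float) -> bool:
        """Record one call at time `now`; return True if read-only mode should trip."""
        self._stamps.append(now)
        # Drop timestamps that have aged out of the window.
        while self._stamps and now - self._stamps[0] > self.window_s:
            self._stamps.popleft()
        return len(self._stamps) > self.max_calls
```

The caller would pass `time.monotonic()` as `now` and flip the read-only flag the first time `record` returns `True`.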

Operators sometimes mirror the same gateway JSON to a staging Mac mini and a production Mac VPS; keep a checksum diff in CI so a stray experimental Skill never ships upstream silently.
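One way to implement that checksum diff is to hash a canonical form of each config, so that key order and whitespace do not raise false alarms. This is a sketch under the assumption that the gateway config is plain JSON; the function names are hypothetical.

```python
import hashlib
import json


def config_digest(raw_json: str) -> str:
    """SHA-256 of a JSON document after canonicalising key order and whitespace."""
    canonical = json.dumps(json.loads(raw_json), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def configs_match(staging_json: str, production_json: str) -> bool:
    """True when both configs carry the same content, regardless of formatting."""
    return config_digest(staging_json) == config_digest(production_json)
```

A CI job could fail the build whenever `configs_match` returns `False` without an approved change ticket.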

5. FAQ: how does this differ from Docker-only laptops?

Q: After the CVE patch, what else must change? Re-audit listeners, reverse-proxy ACLs, stale plist paths, and orphaned binaries; partial fixes behave like no fix.
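The listener re-audit can be partly automated. Here is a minimal sketch, assuming you have already collected the gateway's listen addresses as `host:port` strings (for example from `lsof` output); the helper name `is_loopback_bind` is hypothetical.

```python
import ipaddress


def is_loopback_bind(listen_addr: str) -> bool:
    """Return True only if a 'host:port' listener is confined to loopback."""
    host, _, port = listen_addr.rpartition(":")
    if not host or not port.isdigit():
        raise ValueError(f"expected host:port, got {listen_addr!r}")
    host = host.strip("[]")  # tolerate bracketed IPv6 such as [::1]:18789
    if host == "localhost":
        return True
    if host == "*":
        return False  # lsof-style wildcard: every interface
    return ipaddress.ip_address(host).is_loopback


if __name__ == "__main__":
    for addr in ("127.0.0.1:18789", "0.0.0.0:18789", "[::1]:18789", "*:18789"):
        verdict = "loopback only" if is_loopback_bind(addr) else "EXPOSED"
        print(f"{addr:>18}  {verdict}")
```

This covers only the bind address; reverse-proxy ACLs, stale plist paths, and orphaned binaries still need their own checks.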

Q: May ClawHub Skills auto-update? Avoid silent auto-update in production; pin tags or commits and align with your image digest policy.

Q: Same user for Ollama and the gateway? Acceptable if the Runbook documents RSS soft caps and log isolation; you must still be able to disable the model side independently during an incident.
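The soft caps can be derived from the headroom figures given earlier with simple arithmetic. The sketch below assumes a flat 4 bits per weight, which understates real Q4 files because they also store scales and some higher-precision layers. The per-session KV-cache figure is a placeholder to replace with your own measurement, and all function names are hypothetical.

```python
def model_budget_gb(total_gb: float, headroom_gb: float) -> float:
    """Memory left for Ollama after reserving headroom for macOS and the gateway."""
    return total_gb - headroom_gb


def weights_floor_gb(params_billion: float, bits_per_weight: float = 4.0) -> float:
    """Lower bound on weight size in decimal GB; real quantised files are larger."""
    return params_billion * bits_per_weight / 8.0


def max_sessions(budget_gb: float, weights_gb: float, kv_cache_gb_per_session: float) -> int:
    """How many concurrent sessions fit once the weights are resident."""
    spare = budget_gb - weights_gb
    if spare <= 0:
        return 0
    return int(spare // kv_cache_gb_per_session)
```

On a 24 GB node with 10 GB of headroom the model budget is 14 GB, and the weight floor for 14B parameters is 7 GB. With an assumed 1.5 GB of KV cache per session, that leaves room for four concurrent sessions at most, and fewer once the real file size is measured.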

Q: Must we run both Telegram and Discord? Pick one primary channel and use the other for paging; dual delivery can duplicate tool executions if misconfigured.

Keeping production OpenClaw only on a developer laptop or on "whatever cheap Linux VPS runs Docker" saves money for a week but borrows against sleep policies, port-forward entropy, and kernel cadence mismatches. Docker adds helpful isolation yet still introduces volume permission puzzles and an extra triage layer. If you want an AI agent that is auditable, reversible, and truly always-on in 2026, co-locating the gateway and Ollama on a dedicated, SSH-friendly Apple Silicon Mac cloud with native paths plus launchd is usually calmer than forcing macOS-shaped workflows onto generic Linux. After you finish the seven steps, cross-link your internal Runbook with VPSMAC's longer hardening and ClawHub audit articles; when you need predictable compute and contract-grade operations, renting VPSMAC Mac nodes is typically the cleaner way to keep listener convergence, disk for logs, and token rotation inside a standard operations envelope instead of a personal laptop's ad hoc setup.