2026 OpenClaw with Ollama on Mac Cloud: Provider Setup, Health Checks, and Cloud Fallback

Who and what breaks: Teams already running OpenClaw with cloud API keys want Ollama on the same Mac host for lower latency and predictable spend, but production turns into endless Thinking states, mystery HTTP 500s, and debates about whether Slack or the gateway is guilty.

What you get here: A co-located versus split topology table, concrete steps for base URL and model strings, timeout and retry guidance, a secondary cloud provider as a budgeted fallback, and a doctor-first triage order.

Shape of the article: Numbered pain list, two matrices, seven implementation steps, hard metrics you can paste into reviews, a bridge paragraph on why bare-metal Mac cloud wins over patched Windows or nested macOS, plus FAQ and HowTo JSON-LD. Exact configuration keys follow your OpenClaw release notes.


1. Summary: gateway, Ollama, and cloud keys

OpenClaw Gateway owns routing, tools, and instant-messaging channels while language models may be satisfied either by hosted APIs or by a local HTTP surface. Ollama typically listens on 127.0.0.1:11434 and exposes chat-compatible endpoints. The expensive mistakes are not philosophical debates about open weights; they are mechanical mismatches between the model string you typed in a UI and the tag returned by ollama list, or a containerized gateway that resolves localhost to itself instead of the host that actually runs ollama serve. Timeouts shorter than model cold-start routinely produce false negatives that look like flaky intelligence. Treat provider configuration as infrastructure-as-code: store the base URL, model identifiers, and timeout tiers in the same repository as your gateway manifests so rollbacks do not depend on tribal memory in a Slack thread. This guide assumes SSH access to an Apple Silicon Mac cloud instance with a working baseline gateway install and focuses on making local-first plus cloud-fallback a documented runbook rather than a one-off tweak.
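As a minimal sketch of the infrastructure-as-code idea above, the provider settings can live in a small, versioned structure that is validated before deployment. The field names here (`base_url`, `model`, the three timeout tiers) and the example model tag are illustrative assumptions, not OpenClaw's actual configuration schema; check your release notes for the real keys.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProviderConfig:
    """Hypothetical provider record; NOT the real OpenClaw schema."""
    name: str
    base_url: str
    model: str
    connect_timeout_s: float
    first_byte_timeout_s: float
    total_timeout_s: float

    def validate(self) -> list[str]:
        """Return a list of human-readable problems (empty means OK)."""
        problems = []
        if not self.base_url.startswith(("http://", "https://")):
            problems.append("base_url must start with http:// or https://")
        if ":" not in self.model:
            problems.append("model should carry an explicit tag (name:tag)")
        ordered = (self.connect_timeout_s
                   <= self.first_byte_timeout_s
                   <= self.total_timeout_s)
        if not ordered:
            problems.append("timeouts must satisfy connect <= first_byte <= total")
        return problems


# Example values only; the model tag must match what `ollama list` prints.
local = ProviderConfig(
    name="ollama-local",
    base_url="http://127.0.0.1:11434",
    model="llama3:latest",
    connect_timeout_s=2.0,
    first_byte_timeout_s=60.0,
    total_timeout_s=180.0,
)

# Serialize for committing next to the gateway manifests.
print(json.dumps(asdict(local), indent=2))
```

Committing the serialized record alongside the gateway manifests means a rollback restores the base URL, model string, and timeout tiers together.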

2. Pain points: ports, names, timeouts, channels

Support threads cluster around four themes:

- Bind address mismatch: Ollama loopback-only while the gateway runs in bridge networking, or accidental public exposure of inference ports without authentication.

- Model string drift: Missing `:latest`, wrong registry prefix, or case errors yield 404 responses that channels surface as silence.

- Timeout versus queueing: Multiple concurrent sessions on a 7×24 node stretch queue depth; aggressive HTTP deadlines trip before weights finish loading.

- Fallback policy gaps: No secondary provider means hard failures for users; unconstrained fallback to cloud keys during incidents can invert cost savings.
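The model string drift item lends itself to a pre-flight check. The sketch below compares a requested model string against the names reported by `ollama list`, assuming the usual Ollama convention that an untagged name means `:latest`. The function names are hypothetical helpers, not part of OpenClaw or Ollama.

```python
def normalize(name: str) -> str:
    """Append ':latest' when the final path segment carries no tag.

    Only the segment after the last '/' is inspected, so a registry
    host with a port (host:5000/team/model) is not mistaken for a tag.
    """
    name = name.strip()
    last_segment = name.rsplit("/", 1)[-1]
    return name if ":" in last_segment else name + ":latest"


def resolve_model(requested: str, installed: list[str]):
    """Return (exact_name, None) on a match, else (None, hint)."""
    wanted = normalize(requested)
    if wanted in installed:
        return wanted, None
    # Case differences are reported as a hint rather than silently fixed.
    by_lower = {m.lower(): m for m in installed}
    if wanted.lower() in by_lower:
        return None, f"case mismatch: did you mean {by_lower[wanted.lower()]!r}?"
    return None, f"{wanted!r} is not in the installed model list"


# Example with made-up inventory; real names come from `ollama list`.
print(resolve_model("Llama3", ["llama3:latest", "mistral:7b"]))
```

Running a check like this at deploy time turns a silent channel into an explicit configuration error.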

Operational hygiene matters as much as YAML. Rotate API keys for cloud fallbacks on the same cadence as database credentials, and annotate incidents with the provider that actually served traffic so finance can reconcile spikes. When multiple engineers can SSH into the same Mac node, pin Ollama upgrades behind a change window because a silent binary bump can shift default bind behavior or tokenizer quirks that only appear on long multi-turn threads.

3. Topology matrix: co-located versus split Ollama

| Dimension | Ollama co-located with gateway | Ollama on a separate host |
|---|---|---|
| Latency | Loopback or host bridge, lowest RTT | Requires stable private IP or service DNS |
| Isolation | Process-level; great for single-tenant Mac cloud | Blast-radius separation; ops overhead rises |
| Exposure | Never publish 11434 to the public internet raw | Restrict security groups to gateway sources only |
| Debugging | Local curl suffices | Multi-segment traces, possible mTLS |
| Fit | PoC through medium concurrency | Platform teams splitting GPU-heavy models |

If you split Ollama onto a second Mac or a GPU-heavy box, prefer private RFC1918 addressing with explicit firewall rules over ad-hoc SSH tunnels that disappear when someone reboots the bastion. Record which subnet the gateway uses for outbound calls and whether mTLS is required; misaligned TLS trust stores masquerade as model quality regressions because the gateway silently retries with a degraded path.
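A configured base URL can be classified before it reaches production so that a public address never slips through review. This is a rough sketch using only the Python standard library; the category labels are this example's own, and a plain hostname is reported as such because resolving it is left to your environment.

```python
import ipaddress
from urllib.parse import urlsplit


def exposure(base_url: str) -> str:
    """Classify the host part of a base URL.

    Returns one of: 'invalid', 'loopback', 'private', 'public', 'hostname'.
    """
    host = urlsplit(base_url).hostname
    if host is None:
        return "invalid"
    if host == "localhost":
        return "loopback"
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal; DNS resolution is out of scope for this sketch.
        return "hostname"
    if ip.is_loopback:
        return "loopback"
    if ip.is_private:
        return "private"
    return "public"


for url in ("http://127.0.0.1:11434", "http://10.0.4.12:11434", "http://8.8.8.8:11434"):
    print(url, "->", exposure(url))
```

A deployment check could reject anything classified as `public` unless a reverse proxy with authentication sits in front of it.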

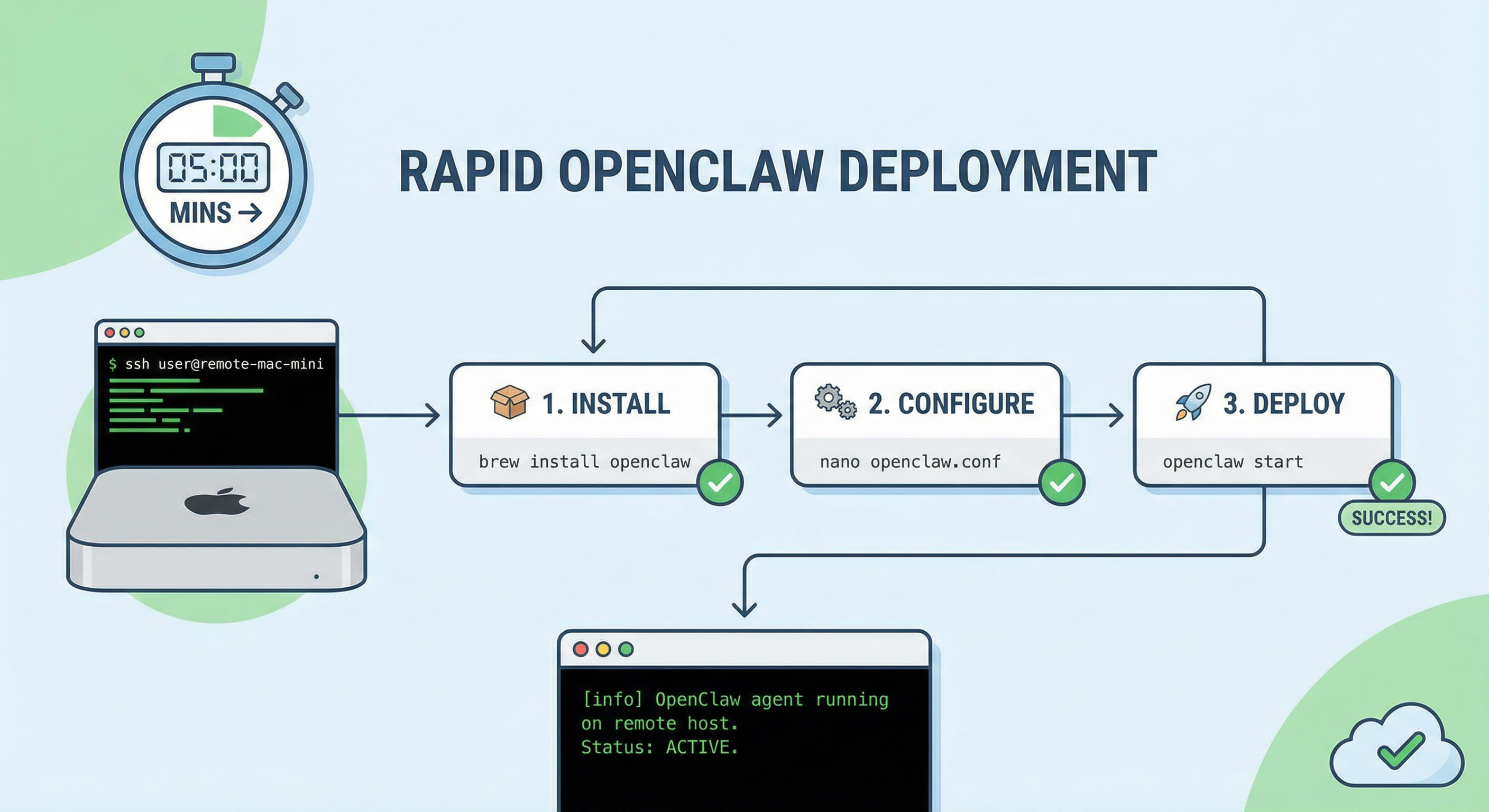

4. Seven steps from empty config to fallback-ready

- Install and pull models: Verify with `ollama --version`, pull weights, capture exact names from `ollama list`.

- Health check: Curl `http://127.0.0.1:11434/api/tags` or the health route your version documents.

- Register the local provider in the gateway: Set base URL appropriate to container networking, model string, and whether you use OpenAI-compatible shims.

- Layer timeouts: Separate connect, first-byte, and end-to-end ceilings to absorb cold start without starving channel heartbeats.

- Add cloud fallback: Configure a secondary hosted provider with per-incident token or spend caps.

- Supervise processes: Use `launchd` or equivalent ordering so Ollama starts before the gateway accepts traffic; wire log rotation.

- Validate: Run three identical prompts, capture time-to-first-token and error rate, then add concurrent sessions to observe queueing.
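The cloud fallback step above can be sketched as a local-first call wrapped in a capped budget. Everything here is illustrative: `local` and `cloud` stand in for whatever client functions your gateway exposes, and `est_tokens` is a hypothetical per-request estimate, not an OpenClaw setting.

```python
class FallbackBudget:
    """Token cap for cloud fallback within one incident window."""

    def __init__(self, max_tokens: int):
        self.max_tokens = max_tokens
        self.used = 0

    def try_spend(self, tokens: int) -> bool:
        """Reserve tokens if the cap allows it; otherwise refuse."""
        if self.used + tokens > self.max_tokens:
            return False
        self.used += tokens
        return True


def complete(prompt, local, cloud, budget, est_tokens):
    """Try the local provider first; fall back to cloud only within budget.

    Returns (provider_label, reply) so logs record who served the request.
    """
    try:
        return "local", local(prompt)
    except (TimeoutError, ConnectionError) as exc:
        if not budget.try_spend(est_tokens):
            # Fail loudly instead of spending past the cap.
            raise RuntimeError("fallback budget exhausted") from exc
        return "cloud", cloud(prompt)


def _cold_local(prompt):
    # Stand-in for a local call that misses its deadline.
    raise TimeoutError("model still loading")


budget = FallbackBudget(max_tokens=1000)
print(complete("ping", _cold_local, lambda p: "pong from cloud", budget, est_tokens=200))
```

Returning the provider label with each reply keeps the fallback visible in logs, and the hard cap prevents a retry storm from quietly inverting the cost savings.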

Quick smoke commands:
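One way to script such a smoke check is the short Python sketch below. It assumes Ollama's default address and the `/api/tags` route mentioned in the health check step, and that the response is a JSON object with a `models` array whose entries carry a `name` field; adjust it if your version documents a different shape.

```python
import json
import urllib.error
import urllib.request


def fetch_tags(base_url: str, timeout_s: float) -> dict:
    """GET <base_url>/api/tags and decode the JSON body."""
    url = f"{base_url.rstrip('/')}/api/tags"
    with urllib.request.urlopen(url, timeout=timeout_s) as resp:
        return json.load(resp)


def installed_models(payload: dict) -> list[str]:
    """Extract model names from a tags payload, tolerating missing keys."""
    return [m["name"] for m in payload.get("models", []) if "name" in m]


def smoke(base_url: str = "http://127.0.0.1:11434", timeout_s: float = 5.0):
    """Return (ok, detail) without raising, so it is safe in cron or CI."""
    try:
        names = installed_models(fetch_tags(base_url, timeout_s))
    except (urllib.error.URLError, OSError, ValueError) as exc:
        return False, f"unreachable or malformed response: {exc}"
    return True, ", ".join(names) or "no models pulled"
```

Calling `smoke()` on the Mac host returns a success flag plus either the installed model names or the reason the check failed. From inside a container, pass the base URL that actually reaches the host instead of the loopback default.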

After wiring providers, run a synthetic load script that mirrors your busiest channel: short factual questions, long summarization jobs, and tool-augmented turns if your stack enables them. Capture p50 and p95 time-to-first-token separately because marketing demos optimize for medians while on-call pain tracks tails. If you must expose any inference surface beyond loopback, terminate TLS at a reverse proxy with mutual authentication or IP allow lists; raw public 11434 is an invitation to crypto miners and accidental data exfiltration.
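To keep medians and tails separate, as recommended above, a nearest-rank percentile over recorded time-to-first-token samples is enough for a first pass. The sample values below are invented for illustration and are not measurements.

```python
import math


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile for an integer pct in 1..100."""
    if not samples:
        raise ValueError("no samples recorded")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be between 1 and 100")
    ordered = sorted(samples)
    # Multiply before dividing so integer inputs avoid float rounding drift.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical time-to-first-token samples in seconds (one cold start at 9.0).
ttft = [0.4, 0.5, 0.5, 0.6, 0.7, 0.8, 0.9, 1.1, 2.5, 9.0]
print("p50:", percentile(ttft, 50))
print("p95:", percentile(ttft, 95))
```

With a single cold start in the sample, the median stays low while the p95 lands on the slow request, which is the gap between demo numbers and on-call experience.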

5. Hard metrics: memory, concurrency, timeouts

Use these ranges in planning decks; always reconcile with the exact quantization you deploy:

- Unified memory: A 7B-class Q4 model often needs on the order of five to six gigabytes of resident weights; running gateway plugins plus a second model on thirty-two gigabytes or less invites swap thrash under burst chat load.

- Concurrency model: Treat local inference as a finite queue. A common pattern is one active local model with overflow to cloud rather than unbounded parallel local jobs.

- Timeout budgeting: Allow tens of seconds for first token during cold start; if steady-state p95 remains above product requirements, shrink model size or concurrency before you simply lower timeouts to hide latency.

- Disk hygiene: Multiple model versions consume tens of gigabytes; pair `ollama rm` with disk alerts on rented Mac nodes.

- Observability: Log model name, duration, and whether fallback fired per request so finance can compare savings versus availability.

- Reliability budgeting: When swapping spikes, response latency stretches even if CPU looks idle; correlate with `vm_stat` before blaming the LLM stack.
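The concurrency bullet above, one active local model with overflow to cloud, can be sketched as a small admission counter. The class name, the limits, and the decision labels are assumptions made for illustration; a real gateway would attach this logic to its own request lifecycle.

```python
class Admission:
    """Finite local queue: run, wait, or overflow to the cloud provider."""

    def __init__(self, max_active: int = 1, max_queued: int = 4):
        self.max_active = max_active
        self.max_queued = max_queued
        self.active = 0
        self.queued = 0

    def admit(self) -> str:
        """Decide where a new request goes."""
        if self.active < self.max_active:
            self.active += 1
            return "run-local"
        if self.queued < self.max_queued:
            self.queued += 1
            return "queue-local"
        return "overflow-cloud"

    def finish(self) -> None:
        """A local job completed; a queued request, if any, takes its slot."""
        if self.queued > 0:
            self.queued -= 1  # the active count stays the same
        elif self.active > 0:
            self.active -= 1


gate = Admission(max_active=1, max_queued=2)
print([gate.admit() for _ in range(4)])
```

Bounding the queue keeps worst-case wait time predictable, and the overflow decision is the natural place to apply a fallback spend cap.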

Capacity planning should include seasonal traffic: marketing pushes and incident bridges multiply concurrent sessions faster than average daily charts suggest. Keep a cold-standby smaller model configured in the gateway so you can flip traffic during weight downloads or disk cleanup without opening a wide cloud spigot. Document expected watts and thermal behavior if you colocate near other services; Apple Silicon efficiency helps until sustained all-core load meets inadequate chassis airflow in dense racks.

6. Troubleshooting: doctor, logs, channel FAQ

Follow a fixed ladder: openclaw doctor, gateway logs for the message timestamp, Ollama process health, then channel pairing rules. If users see Thinking with no reply, confirm whether HTTP completed; if HTTP succeeded yet chat is empty, inspect rate limits and mention policies. Use the symptom matrix below in postmortems.

| Symptom | Check first | Then check |
|---|---|---|
| Immediate 404 | Model string versus ollama list | Wrong base URL or container localhost |
| Intermittent timeout | Cold start and queue depth | Swap and disk pressure |
| Channel silence | Gateway swallowed tool errors | Webhook or bot scopes |
| Bill spike after fallback | Retry storms to cloud | Missing caps on fallback window |
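The fixed ladder described at the start of this section can be encoded so every responder walks it in the same order. In this sketch each rung is a named callable returning `True` when healthy; the lambdas are hypothetical placeholders for whatever wraps `openclaw doctor`, your log search, and your Ollama health check.

```python
def triage(ladder):
    """Run checks in order and return the name of the first failing rung.

    `ladder` is an ordered list of (name, check) pairs, where check()
    returns True when that layer looks healthy. Returns None if all pass.
    """
    for name, check in ladder:
        if not check():
            return name
    return None


# Placeholder checks; replace each lambda with a real probe.
ladder = [
    ("openclaw doctor", lambda: True),
    ("gateway logs at message timestamp", lambda: True),
    ("ollama process health", lambda: False),  # simulated failure
    ("channel pairing rules", lambda: True),
]

print("first failing rung:", triage(ladder))
```

Stopping at the first failing rung keeps postmortems consistent: lower layers are not blamed until the layers above them are confirmed healthy.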

Running macOS workloads on generic Windows desktops or unlicensed nested virtualization trades short-term hardware savings for chronic compatibility and graphics stack debt. Docker-only stacks add volume UID puzzles and extra DNS hops that show up only under 7×24 load. When you need Apple Silicon vector performance, native launchd supervision, and a single SSH surface for both OpenClaw and Ollama, renting dedicated Mac cloud capacity usually beats stacking fragile heterogeneous layers. Pair that compute with the VPSMAC quick-start articles so provisioning feels closer to ordering a VPS API than to a science project.

Finally, rehearse failure modes quarterly: kill the Ollama process intentionally, saturate disk, and revoke a cloud key to confirm alerts and fallback caps behave as designed. Tabletop exercises beat discovering blind spots during a customer-visible outage. When documentation, metrics, and drills align, local inference stops being a science fair demo and becomes a boring, billable service.