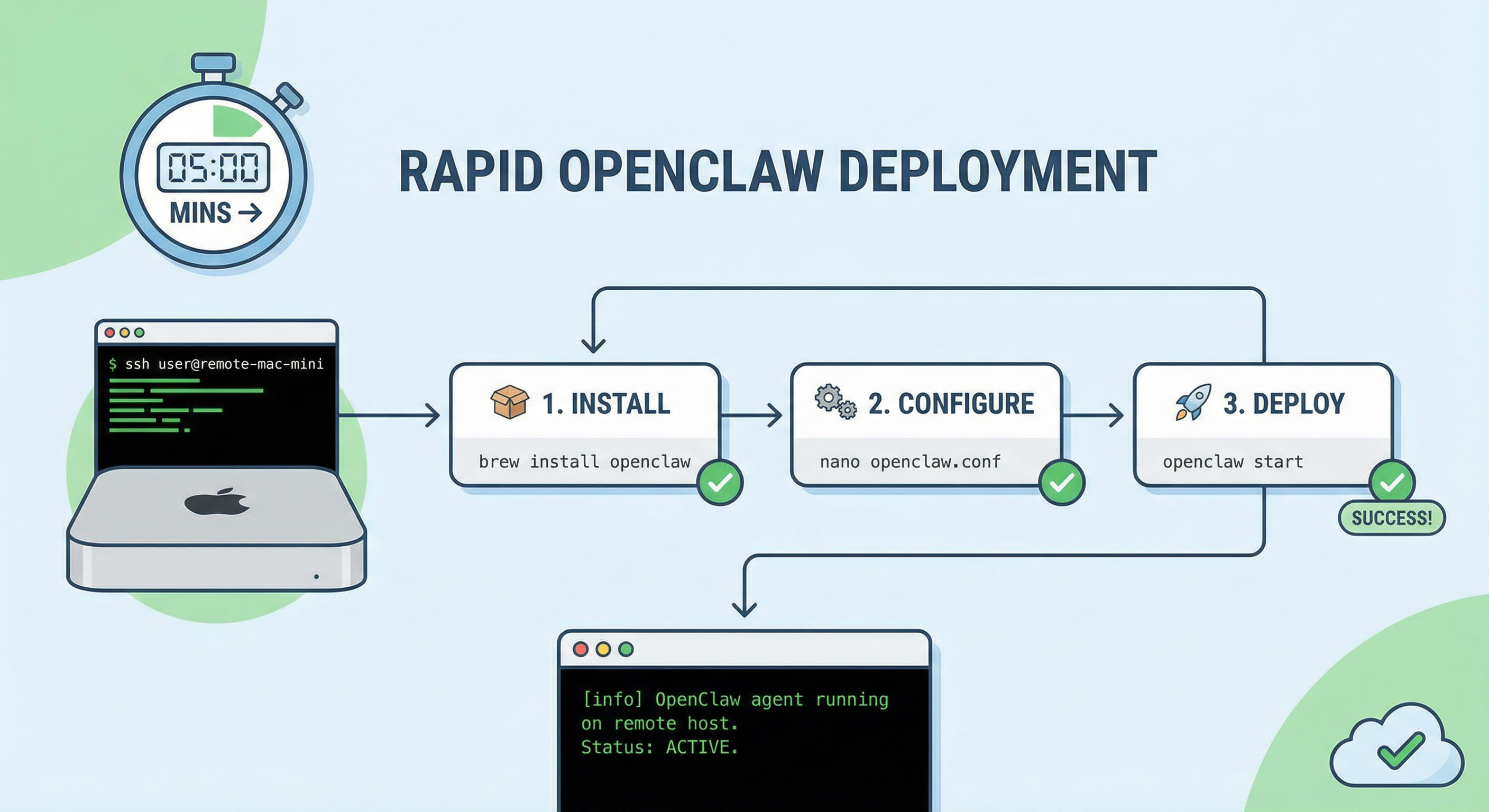

OpenClaw "Channel Online But No Reply" Playbook 2026: Pairing, requireMention, Discord Intents / Slack Permissions, and Layered Gateway Logs (Mac VPS 24/7)

Operators of bare-metal Mac gateways often see green dashboards while users experience a black hole. This article is for 2026 teams running OpenClaw under launchd on VPS-style Mac hosts. It splits triage into three layers (instant-messenger and bot policy, gateway delivery and authentication, and model or quota errors) so that you stop swapping API keys before you have read the pairing queues. You get a symptom matrix, a six-step probe ladder, three metrics you can paste into incident reviews, and structured FAQ data that complement the existing VPSMAC guides on mention pairing, heartbeat silence, and Anthropic 429 routing.


1. Three pain classes: online is not end-to-end healthy

Mature on-call rotations distinguish whether the instant messenger handed an event to your bot, whether the gateway accepted it for a session, and whether the model returned a completion. A single green LED on a dashboard rarely proves all three.

- Policy-layer drops: `requireMention`, per-room overrides, or thread-only modes discard messages before model invocation. Direct messages look fine while group chats feel dead. When behavior differs per room, suspect stale per-channel overrides instead of a global outage.

- Pairing and trust queues: New senders may sit in pending until an operator approves. Probes can still succeed because heartbeats and OAuth handshakes use different paths than user-authored payloads. Post-upgrade regressions often trace to defaults that quietly re-enabled stricter pairing.

- Platform permissions and intents: Discord without Message Content Intent, bots missing channel membership, or Slack apps reinstalled without restoring the full event subscription bundle can yield inbound flakiness or silent outbound drops while CPU stays flat, which looks nothing like an OOM storm.
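The policy-layer case is easier to reason about with a toy model. The sketch below is not OpenClaw's implementation: `RoomPolicy`, `Message`, and `passes_policy` are hypothetical names, and the assumption that direct messages bypass mention rules is taken only from the symptom described above (DMs fine, groups dead).

```python
from dataclasses import dataclass


@dataclass
class RoomPolicy:
    # Hypothetical stand-ins for requireMention and a thread-only mode.
    require_mention: bool = True
    thread_only: bool = False


@dataclass
class Message:
    room: str
    is_dm: bool
    mentions_bot: bool
    in_thread: bool = False


def passes_policy(msg, default, overrides):
    """Return True if the message would reach the model under this toy policy."""
    if msg.is_dm:
        return True  # assumption: DMs are not subject to mention rules
    # A per-room override wins over the global default.
    policy = overrides.get(msg.room, default)
    if policy.thread_only and not msg.in_thread:
        return False
    if policy.require_mention and not msg.mentions_bot:
        return False
    return True
```

With a permissive default and one stale override, only the overridden room goes quiet, which is why a per-room difference points at overrides rather than a global outage.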

On Mac VPS hosts under launchd, add a fourth shadow class: environment drift between interactive SSH shells and the daemon plist. The same openclaw binary may read different config paths when HOME or PATH diverges, producing contradictory green checks.
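One way to make that drift visible is to compare the `EnvironmentVariables` dictionary in the daemon plist with the environment of an interactive shell. This is a minimal sketch using Python's standard `plistlib`; the helper names and the choice of `HOME` and `PATH` as default keys are assumptions for illustration, and the plist path is left to the reader.

```python
import plistlib


def plist_env(plist_bytes):
    """Extract the EnvironmentVariables dict from a launchd plist (empty if absent)."""
    return dict(plistlib.loads(plist_bytes).get("EnvironmentVariables", {}))


def env_drift(daemon_env, shell_env, keys=("HOME", "PATH")):
    """Return {key: (daemon_value, shell_value)} for every key whose values differ."""
    drift = {}
    for key in keys:
        daemon_value = daemon_env.get(key)  # None when the plist does not set it
        shell_value = shell_env.get(key)
        if daemon_value != shell_value:
            drift[key] = (daemon_value, shell_value)
    return drift
```

In practice you would read the plist with `open(path, "rb").read()` and pass `dict(os.environ)` from the SSH session as `shell_env`. An empty result means the two contexts agree on the keys you checked, not that they agree on everything.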

Incident commanders should keep two runbooks: one that stops at channel probes and platform portals, and another that continues into model logs. Mixing both on one page trains responders to rotate API keys first, which rarely fixes mention rules.

2. Symptom matrix

Use the table verbatim in bridge calls so product and infra argue about the same layer.

| Symptom | Primary layer | First proof | Usually not |
|---|---|---|---|
| Groups silent, DMs fine | Mention or room policy | @ bot A/B message | Global model outage |
| New users silent, veterans fine | Pairing backlog | pairing list | Disk full |
| Guild channels only broken | Discord intents or roles | Developer portal checklist | Random port 18789 flaps |
| Slack intermittent ghosting | Event URL or workspace install scope | Re-auth plus delivery logs | Temperature tuning |
| All channels plus 429 strings | Provider quota | Vendor console and downgrade plan | Wiping pairing entirely |
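If your incident bot or runbook tooling needs the same matrix, it can be kept as data so that chat output and the written table never diverge. The sketch below simply transcribes the rows above; the dictionary keys and the `triage` helper are hypothetical names chosen for illustration.

```python
from typing import NamedTuple


class Triage(NamedTuple):
    primary_layer: str
    first_proof: str
    usually_not: str


# Rows copied from the symptom matrix; the short keys are illustrative only.
SYMPTOM_MATRIX = {
    "groups_silent_dms_fine": Triage(
        "Mention or room policy", "@ bot A/B message", "Global model outage"),
    "new_users_silent": Triage(
        "Pairing backlog", "pairing list", "Disk full"),
    "guild_channels_broken": Triage(
        "Discord intents or roles", "Developer portal checklist",
        "Random port 18789 flaps"),
    "slack_ghosting": Triage(
        "Event URL or workspace install scope", "Re-auth plus delivery logs",
        "Temperature tuning"),
    "all_channels_429": Triage(
        "Provider quota", "Vendor console and downgrade plan",
        "Wiping pairing entirely"),
}


def triage(symptom):
    """Look up the layer to inspect first; raises KeyError for unknown symptoms."""
    return SYMPTOM_MATRIX[symptom]
```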

3. Six-step ladder from probe to model split

Execute one variable per maintenance window and capture timestamps for each command snippet.

- Channel probe: `openclaw channels status --probe` to validate RPC plus channel handshakes. Failures here stop the story in the gateway layer.

- Pairing audit: `pairing list` for pending rows; document who may approve and how on-call inherits that duty during vacations.

- Policy export: Dump messaging-related flags, explicitly annotating `requireMention`, allowlists, and thread rules; reconcile with product copy about whether @ is mandatory in public rooms.

- Platform permission matrix: Discord intents and channel visibility; Slack scopes including `chat:write` and event subscriptions pointing at the current gateway ingress.

- Gateway self-check: `openclaw doctor` plus `openclaw gateway status` (add `--deep` when policy demands); compare launchd `EnvironmentVariables` with your interactive shell on the same user account.

- Model split: If the first five steps stay clean, read provider 429 counters, long-context flags, and tool gate logs before touching pairing data wholesale.

openclaw pairing list

openclaw doctor

openclaw gateway status
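The command-driven rungs of the ladder can be wrapped so that each run records a timestamp and stops at the first failure. This is a sketch under stated assumptions: it covers only the four commands named in this section, it treats a non-zero exit code as failure (the article does not document exit codes), and `run_ladder` with its injectable `run` and `clock` parameters is a hypothetical helper. Policy export, the platform permission matrix, and the model split remain manual steps.

```python
import subprocess
import time

# Only commands that appear in this article; no extra flags are assumed.
LADDER = [
    ("channel probe", ["openclaw", "channels", "status", "--probe"]),
    ("pairing audit", ["openclaw", "pairing", "list"]),
    ("doctor", ["openclaw", "doctor"]),
    ("gateway status", ["openclaw", "gateway", "status"]),
]


def run_cli(argv):
    """Run a command and return (exit_code, combined stdout and stderr)."""
    proc = subprocess.run(argv, capture_output=True, text=True)
    return proc.returncode, proc.stdout + proc.stderr


def run_ladder(steps=LADDER, run=run_cli, clock=time.time):
    """Run steps in order, timestamp each one, and stop at the first non-zero exit."""
    results = []
    for name, argv in steps:
        started = clock()
        code, output = run(argv)
        results.append({"step": name, "argv": argv, "started": started,
                        "exit_code": code, "output": output})
        if code != 0:  # assumption: non-zero exit means this layer failed
            break
    return results
```

Run it as the same user that owns the launchd job, and attach the returned list to the incident record so the timestamps travel with the evidence.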

When multiple instant messengers are enabled, shrink the blast radius during triage by getting a single channel fully working end to end first, then re-enable the others once the silent failure disappears.

For teams that also run CI on the same Mac, schedule heavy xcodebuild bursts away from IM webhook validation windows so disk latency does not masquerade as networking issues.

4. Three citable metrics

- Probe failure rate: Rising `channels status --probe` failures with flat CPU implicates tokens, callback URLs, or vendor-side throttles before model code paths.

- Pending pairing depth: A chronically non-zero queue signals human-in-the-loop approval debt; process change beats nightly cron deletes.

- Group versus DM success gap: When group delivery rates lag direct messages, mention and visibility fixes outperform buying another host.
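The three metrics reduce to simple arithmetic over counts you already collect. The sketch below assumes you have tallied probe runs, pairing rows, and delivery outcomes yourself; the function names and the `"status": "pending"` row shape are hypothetical, not an OpenClaw output format.

```python
def probe_failure_rate(failed, total):
    """Fraction of probe runs that failed; 0.0 when no probes were run."""
    return failed / total if total else 0.0


def pending_depth(rows):
    """Count pairing rows still awaiting approval (row shape is an assumption)."""
    return sum(1 for row in rows if row.get("status") == "pending")


def group_dm_gap(group_ok, group_total, dm_ok, dm_total):
    """DM success rate minus group success rate; positive means groups lag."""
    group_rate = group_ok / group_total if group_total else 0.0
    dm_rate = dm_ok / dm_total if dm_total else 0.0
    return dm_rate - group_rate
```

For example, 6 of 10 group messages answered against 9 of 10 direct messages gives a gap of about 0.3, which points at mention and visibility rules before capacity.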

4.1 Playbooks that survive audits

Three patterns recur in 2026 postmortems; pick the narrative that matches your outage instead of improvising a fourth.

Playbook A — IM-first freeze: Freeze model and tool changes for one hour, run only probes and pairing commands, and capture Slack or Discord delivery receipts. If silence disappears when mention rules are temporarily relaxed in a sandbox channel, you have enough evidence to open a product ticket instead of a Sev1 infra bridge.

Playbook B — Gateway identity alignment: Snapshot the plist that launches the gateway, diff it against the shell profile used for manual openclaw invocations, then align HOME, config paths, and token file permissions. Finance-friendly Mac leases reward this discipline because the same host often cohosts CI and agents; drift there creates ghost incidents that never show up in cloud-only monitoring.

Playbook C — Provider fallback rehearsal: When probes stay green yet transcripts show throttling language, rehearse documented downgrade paths for long-context or high-token models before declaring the IM stack broken. Teams that rehearse quarterly spend less weekend time re-reading vendor status pages.

Across playbooks, annotate every command with runner identity—daemon user versus human SSH—to stop debates about which log file is authoritative.

4.2 Technical depth: why logs disagree

Gateway logs may show handled events while customers see nothing because acknowledgement paths differ from user-visible replies. Teach responders to read sequence identifiers and correlation IDs rather than scanning for the word error only. When Discord or Slack retries deliveries, duplicate suppression rules can hide the second attempt unless you widen the trace window.
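Reading by correlation ID can be sketched as a grouping pass over parsed log events. The event shape used here (`correlation_id` and `stage` fields with values such as `"handled"` and `"reply_sent"`) is hypothetical; map it onto whatever fields your gateway logs actually emit.

```python
from collections import Counter, defaultdict


def unanswered(events):
    """Return correlation IDs that were handled but never produced a reply."""
    stages = defaultdict(set)
    for event in events:
        stages[event["correlation_id"]].add(event["stage"])
    return sorted(cid for cid, seen in stages.items()
                  if "handled" in seen and "reply_sent" not in seen)


def delivery_attempts(events, stage="received"):
    """Count how often each correlation ID hit a stage; values above 1 suggest retries."""
    return Counter(e["correlation_id"] for e in events if e["stage"] == stage)
```

An ID that appears in `unanswered` and also shows more than one attempt in `delivery_attempts` is a candidate for the retry-plus-suppression case described above, so widen the trace window around it first.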

Corporate HTTP proxies and TLS inspection boxes also create asymmetric failures: outbound model calls succeed while inbound webhooks stall, or vice versa, depending on certificate pinning and SNI routing. Dedicated Mac nodes with static egress documentation simplify conversations with corporate network teams compared to ever-shifting laptop hotspots.

5. FAQ

Should I restart the gateway first? If probes and doctor stay clean, restarts mostly hide unsaved config drift; finish the matrix first.

How do I keep Matrix from confusing Slack triage? Temporarily disable nonessential channels until one messenger path is golden, then add complexity back with checkpoints.

Should pairing approvals live in chat? Prefer a documented on-call rotation with a backup approver; chat-only approvals become single points of failure during holidays.

6. Conclusion

Silent channels while lights stay green are usually policy or permission issues upstream of the model, not sudden model amnesia. Splitting layers turns Mac VPS pager noise into auditable steps.

Relying on a laptop session or ephemeral containers makes it hard to reproduce the intersection of launchd, IM callbacks, and long-lived tokens; what works on a developer desk rarely equals production plist truth. For teams that need Apple Silicon hosts where SSH, launchd, and gateway identity line up with IM ingress, leasing VPSMAC Mac cloud nodes is usually closer to the root cause than chasing another API bundle without changing topology.