OpenClaw in 2026: Brave / Parallel / Tavily web search, Mac cloud quotas, and web_search/web_fetch

Who this is for and what it solves: OpenClaw teams on Mac cloud or laptops see vague errors, surprising API bills, and failing fetches without knowing whether search, egress, or TLS breaks first. What you get: a way to separate web_search (discovery) from web_fetch (reading), a matrix for choosing Brave, Parallel, or Tavily, and steps to harden keys and egress on a dedicated Mac node. Structure: numbered sections, tables, a rollout of more than five steps, metrics, latency/cost examples, and FAQ/HowTo JSON-LD. Variable names depend on your OpenClaw version.

1. Summary: web_search vs web_fetch

web_search handles discovery: a query returns candidate URLs and snippets. web_fetch handles the deep read: it pulls and cleans a single page. Their failures look different. Search problems usually trace to API keys, quotas, or regional policy; fetch problems trace to anti-bot rules, TLS interception, redirect chains, or the reputation of shared egress IPs on Mac cloud. Diagnosing the two separately avoids pointless reinstalls. Typical 2026 stacks combine a general search API (e.g., Brave) for baseline cost control, a Parallel-class API where agent-grade citations justify premium spend, and a Tavily-style option where fast integration matters. On Mac cloud the hard part is not picking a brand. It is rotating keys, making sure the daemon user inherits the environment, confirming that corporate proxies allow every required domain, and keeping 429/402 responses or empty results from being misread as “the model got dumber.” When tools fail, models may also silently fall back to what they remember, so surface tool errors as structured data instead of trusting the final prose alone.
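One way to surface tool errors structurally is to wrap every tool call in a small result record and render failures as explicit markers before the text reaches the model. The sketch below uses hypothetical names (`ToolResult`, `render_for_model`, and the `TOOL_ERROR`/`TOOL_EMPTY` markers); OpenClaw's own tool result format may differ, so treat this as an illustration of the pattern, not its API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolResult:
    tool: str                         # "web_search" or "web_fetch"
    ok: bool
    content: str = ""
    error_code: Optional[str] = None  # e.g. "429", "402", "tls_handshake" (illustrative codes)
    detail: str = ""


def render_for_model(result: ToolResult) -> str:
    """Make failures and empty results explicit so the model cannot quietly
    fall back to memory and present it as a search answer."""
    if result.ok and result.content.strip():
        return result.content
    if result.ok:
        # A successful call with no results is still a signal worth naming.
        return f"TOOL_EMPTY {result.tool}: no results returned"
    message = f"TOOL_ERROR {result.tool} {result.error_code or 'unknown'}"
    return f"{message}: {result.detail}" if result.detail else message
```

The explicit `TOOL_EMPTY` case matters because a free tier returning empty results under quota pressure should read differently from a genuine answer, both to the model and in your logs.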

At the protocol level, every successful web_fetch depends on a chain: DNS → TCP → TLS (SNI, certificate chain, optional mTLS) → HTTP (redirects, compression, charset) → decoding and byte caps. If a corporate proxy or shared egress rewrites any hop, OpenClaw sees flaky timeouts or truncated pages, which are often misattributed to “bad search quality” unless you test each stage separately.
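To decouple the stages, a probe can walk DNS, TCP, TLS, and HTTP one at a time and report the first stage that fails. This is a stdlib-only sketch, not how OpenClaw fetches internally; `probe` and `ProbeReport` are illustrative names. Run it as the daemon's user so it sees the same resolver and CA store. It connects directly rather than through an HTTP proxy, so on networks that force a proxy a `tcp` failure here may simply mean direct egress is blocked.

```python
import socket
import ssl
import time
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ProbeReport:
    host: str
    failed_stage: Optional[str] = None   # "dns", "tcp", "tls", "http", or None if all passed
    error: str = ""
    status_line: str = ""
    timings_ms: Dict[str, float] = field(default_factory=dict)


def probe(host: str, port: int = 443, path: str = "/", timeout: float = 5.0) -> ProbeReport:
    """Walk DNS -> TCP -> TLS -> HTTP one stage at a time and report where it breaks."""
    report = ProbeReport(host=host)
    stage = "dns"
    sock = None
    start = time.monotonic()

    def mark(name: str) -> None:
        # Record how long the stage that just finished took, then reset the clock.
        nonlocal start
        now = time.monotonic()
        report.timings_ms[name] = round((now - start) * 1000, 1)
        start = now

    try:
        # DNS: system resolver; `timeout` does not apply to this call.
        family, socktype, proto, _, addr = socket.getaddrinfo(
            host, port, type=socket.SOCK_STREAM)[0]
        mark("dns")

        stage = "tcp"
        sock = socket.socket(family, socktype, proto)
        sock.settimeout(timeout)
        sock.connect(addr)
        mark("tcp")

        stage = "tls"
        # Sends SNI and verifies the certificate chain against the default CA store.
        sock = ssl.create_default_context().wrap_socket(sock, server_hostname=host)
        mark("tls")

        stage = "http"
        request = f"HEAD {path} HTTP/1.1\r\nHost: {host}\r\nConnection: close\r\n\r\n"
        sock.sendall(request.encode("ascii"))
        first_line = sock.recv(1024).split(b"\r\n", 1)[0].decode("latin-1")
        if not first_line.startswith("HTTP/"):
            raise ValueError(f"unexpected response start: {first_line!r}")
        report.status_line = first_line
        mark("http")
    except (OSError, ValueError) as exc:  # gaierror, timeouts and SSLError are OSErrors
        report.failed_stage = stage
        report.error = f"{type(exc).__name__}: {exc}"
    finally:
        if sock is not None:
            sock.close()
    return report
```

For example, `print(probe("docs.python.org"))` on a healthy network should record all four timings; a `tls` failure with a certificate verification error on a corporate network usually points at HTTPS inspection rather than at the search provider.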

2. Pain points

- Keys on the wrong layer: exported in an interactive shell but not in the launchd/systemd/Docker environment, so the service reports “missing key” while an SSH session shows the variables set.

- Quota drift: free tiers return empty results or vague errors; without per-hour counters, teams blame their prompts.

- Egress and SNI: search APIs and target sites may fall under different egress policies; shared cloud IPs can trigger CAPTCHAs that have nothing to do with the search provider.

- Fetch blamed first: the agent “cannot read the web” when in fact search never returned a usable URL, or the URLs require login cookies.

- Provider switches without a cold restart: stale environment or routing causes intermittent success. Diff the configs and restart instead of rewriting system prompts.
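For the “keys on the wrong layer” and stale-environment cases, it helps to compare what the interactive shell and the service actually see without printing secrets. The sketch below hashes each value to a short fingerprint; the names in `DEFAULT_KEYS` are hypothetical placeholders, so substitute the variables your OpenClaw version actually reads. A short unsalted hash still identifies a key, so treat the output as sensitive.

```python
import hashlib
import json
import os
import sys
from typing import Dict, Iterable, Mapping, Tuple

# Hypothetical variable names for illustration; use the ones your OpenClaw version reads.
DEFAULT_KEYS = ("BRAVE_API_KEY", "TAVILY_API_KEY", "PARALLEL_API_KEY",
                "HTTPS_PROXY", "NO_PROXY")


def fingerprint(env: Mapping[str, str], keys: Iterable[str] = DEFAULT_KEYS) -> Dict[str, str]:
    """Map each key to 'missing' or a 12-character SHA-256 prefix of its value."""
    result = {}
    for key in keys:
        value = env.get(key)
        result[key] = "missing" if not value else hashlib.sha256(value.encode()).hexdigest()[:12]
    return result


def diff(a: Mapping[str, str], b: Mapping[str, str]) -> Dict[str, Tuple[str, str]]:
    """Keys whose fingerprint differs between two environments."""
    return {k: (a.get(k, "missing"), b.get(k, "missing"))
            for k in sorted(set(a) | set(b))
            if a.get(k, "missing") != b.get(k, "missing")}


if __name__ == "__main__":
    # Run once from the SSH shell and once from the service context, then compare.
    json.dump(fingerprint(os.environ), sys.stdout, indent=2, sort_keys=True)
    print()
```

Run it once from your SSH session and once from inside the launchd/systemd unit or container, then compare the two JSON outputs; any key that is `missing` or differs on the service side explains “works in SSH, fails in the daemon.”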

3. Provider matrix

Use this matrix in architecture reviews; pricing and terms are vendor-specific, so check each vendor's current 2026 terms. If you egress both domestically and internationally, allowlist search API hostnames separately from the high-traffic documentation domains you fetch often; otherwise you get flaky timeouts that look like application bugs.

| Dimension | Brave Search API (typical) | Parallel-style agent API | Tavily-style (typical) |
|---|---|---|---|
| Value | Broad index, straightforward | Higher-quality agent retrieval | Fast onboarding, rich tutorials |
| Cost | Often moderate tiers | Premium for quality/latency | Per-call; watch for spikes |
| Compliance | Respect robots/ToS + API terms | Enterprise review for logging/retention | Same; watch query logging |
| Relation to web_fetch | Returns URLs; fetch still needed | May include excerpts; fetch may still help | Similar validation path |
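To keep search API egress separate from page fetches, you can label every outbound URL before it leaves the gateway and attach proxy rules, timeouts, and alerts per label. The hostnames below are assumptions to verify against each vendor's current documentation (no Parallel endpoint is listed; add the one your contract specifies), and the fetch allowlist is purely illustrative.

```python
from urllib.parse import urlsplit

# Assumed hostnames: confirm each against the vendor's current API documentation.
SEARCH_API_HOSTS = {"api.search.brave.com", "api.tavily.com"}

# Illustrative documentation domains your agents fetch often.
FETCH_ALLOWLIST = ("python.org", "developer.apple.com", "github.com")


def egress_class(url: str) -> str:
    """Label an outbound URL so proxy rules, logs, and alerts can treat
    search API traffic separately from page fetches."""
    host = (urlsplit(url).hostname or "").lower()
    if host in SEARCH_API_HOSTS:
        return "search_api"
    # Match the domain itself or any subdomain, but not look-alikes such as "evilpython.org".
    if any(host == d or host.endswith("." + d) for d in FETCH_ALLOWLIST):
        return "fetch_allowlisted"
    return "fetch_other"
```

Grouping metrics by these labels makes it obvious when timeouts cluster on `fetch_other` (target sites, anti-bot) rather than `search_api` (keys, quotas).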

4. Six-step rollout

- Confirm service identity: run the doctor/config checks as the same user the daemon runs as, to avoid split-brain keys.

- Minimal web_search probe: send a single query with no long reasoning chain and verify the structured fields (URL, title).

- Single-URL web_fetch probe: fetch a page from a stable HTTPS documentation site and record whether failures are TLS errors or HTTP status codes.

- Layer in proxies and multiple providers: validate each path with `curl -I` under the service account before testing through OpenClaw.

- Metrics and alerts: count searches per hour and alert on sustained 429/402 responses; see the observability and Docker guides.

- Regression on real prompts: run three business query classes (version lookup, error string, comparison) and check that results include the expected domains.
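The web_search probe and the regression step both come down to checking structure and domains. The sketch assumes a hypothetical normalized response shape `{"results": [{"url": ..., "title": ...}]}`; real provider payloads differ, so map them into this shape first or adapt the field names.

```python
from typing import Any, Dict, Iterable, List
from urllib.parse import urlsplit


def check_search_payload(payload: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the probe passed."""
    results = payload.get("results")
    if not isinstance(results, list) or not results:
        return ["no results (empty response, quota, or schema change?)"]
    problems = []
    for i, item in enumerate(results):
        if not isinstance(item, dict):
            problems.append(f"result {i}: not an object")
            continue
        url, title = item.get("url"), item.get("title")
        if not url:
            problems.append(f"result {i}: missing url")
        elif urlsplit(url).scheme not in ("http", "https"):
            problems.append(f"result {i}: unexpected url {url!r}")
        if not title:
            problems.append(f"result {i}: missing title")
    return problems


def hits_expected_domain(payload: Dict[str, Any], expected: Iterable[str]) -> bool:
    """Regression check: does any result URL fall under one of the expected domains?"""
    hosts = {(urlsplit(r.get("url") or "").hostname or "")
             for r in payload.get("results") or [] if isinstance(r, dict)}
    domains = list(expected)  # may be iterated once per host
    return any(h == d or h.endswith("." + d) for h in hosts for d in domains)
```

A “null URLs” result from the first check points at parsing or version skew, while a structurally valid payload that misses every expected domain is a retrieval-quality question, not a fetch problem.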

5. Hard metrics

- 429 backoff: use exponential backoff and fewer concurrent tool calls; repeated searches across multiple turns multiply cost.

- Timeouts: set separate limits for search and fetch, and cap response bytes so the gateway does not run out of memory (in Docker this shows up as Exit 137).

- DNS/IPv6: some images prefer AAAA records; if IPv6 paths are broken, force IPv4 or reorder address resolution.

- User-Agent / robots: default User-Agent strings may be blocked; follow vendor guidance and audit any changes.

- Redirect depth: record the final URL and cap the number of redirects so endless chains cannot stall workers.
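A minimal sketch of the 429 handling, assuming a hypothetical `RateLimited` exception raised by your provider wrapper: capped exponential backoff with full jitter, honoring a `Retry-After` value in seconds when the server sends one. It deliberately does not retry 402, which signals a billing or quota problem rather than transient load, and it does not limit concurrency on its own; pair it with a cap on simultaneous tool calls. `sleep` and `rng` are injectable so the behavior can be tested without waiting.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical exception a provider wrapper raises on HTTP 429."""

    def __init__(self, retry_after: Optional[float] = None):
        super().__init__("429 Too Many Requests")
        self.retry_after = retry_after


def call_with_backoff(fn: Callable[[], T], max_retries: int = 4, base: float = 0.5,
                      cap: float = 30.0, sleep: Callable[[float], None] = time.sleep,
                      rng: Callable[[], float] = random.random) -> T:
    """Retry fn on RateLimited using capped exponential backoff with full jitter."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimited as exc:
            if attempt == max_retries:
                raise  # surface the 429 instead of looping forever
            # Full jitter: sleep a random fraction of min(cap, base * 2**attempt).
            delay = rng() * min(cap, base * 2 ** attempt)
            if exc.retry_after is not None:
                delay = max(delay, exc.retry_after)  # never retry sooner than the server asked
            sleep(delay)
    raise AssertionError("unreachable")
```

Full jitter spreads retries from parallel agents so they do not hit the provider in lockstep, which is what keeps a short burst from turning into a sustained 429 alert.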

6. Latency and cost rough cuts (examples)

The figures below are order-of-magnitude planning aids, not benchmarks. Replace them with measurements from your own region, model, and provider invoices.

| Scenario (illustrative) | web_search latency | web_fetch latency | Cost intuition (typical 2026 tiers) |
|---|---|---|---|
| One simple query + one doc page | ~0.3–2.0 s (varies by region/provider) | ~0.5–3.0 s (varies by page size/TLS) | Per-call metering; 429 risk rises with burst concurrency |
| Every dialog turn triggers a search | Adds linearly on top of model TTFT | Multiple URLs per turn worsen tail latency | Cost tracks duplicate queries; cache or dedupe |
| Corporate HTTPS inspection | May add hundreds of ms per hop | Large pages + decompression push toward the timeout | Engineering time often exceeds API unit price |
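For the “every dialog turn triggers a search” row, a small TTL cache keyed by a normalized query removes duplicate calls within a session; with per-call metering, each cache hit is one call you do not pay for. This is an illustrative in-process sketch (`QueryCache` is a hypothetical name), not an OpenClaw feature. Keep the TTL short for time-sensitive queries such as version lookups.

```python
import time
from typing import Any, Callable, Dict, Tuple


class QueryCache:
    """Tiny in-process TTL cache keyed by a normalized query string."""

    def __init__(self, ttl_seconds: float = 600.0,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: Dict[str, Tuple[float, Any]] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def normalize(query: str) -> str:
        # Case and whitespace differences should not cost a second API call.
        return " ".join(query.lower().split())

    def get_or_fetch(self, query: str, fetch: Callable[[str], Any]) -> Any:
        key = self.normalize(query)
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            self.hits += 1
            return entry[1]
        self.misses += 1
        value = fetch(query)          # exceptions propagate and are not cached
        self._store[key] = (now, value)
        return value
```

Empty results are cached like any other value here; if your provider returns empty lists under quota pressure, skip storing them so a quota blip is not replayed for the whole TTL.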

Instrument search → fetch → summarize with one request ID and three segment durations, so that “it feels slow” can be traced to the model, the tools, or the network. This is the same playbook as the structured JSONL observability articles on this site.
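A sketch of that instrumentation with the standard library: one request ID, one timed segment per stage, and a single JSON line per request. The segment names (`search`, `fetch`, `summarize`) and the `Trace` class are illustrative; adapt the fields to whatever your JSONL pipeline already expects.

```python
import json
import time
import uuid
from contextlib import contextmanager
from typing import Dict, Iterator, Optional


class Trace:
    """One request ID plus per-segment durations, emitted as a single JSONL line."""

    def __init__(self, request_id: Optional[str] = None):
        self.request_id = request_id or uuid.uuid4().hex
        self.segments_ms: Dict[str, float] = {}
        self.errors: Dict[str, str] = {}

    @contextmanager
    def segment(self, name: str) -> Iterator[None]:
        start = time.perf_counter()
        try:
            yield
        except Exception as exc:
            self.errors[name] = type(exc).__name__  # record the failure, then re-raise
            raise
        finally:
            # Duration is recorded whether the segment succeeded or failed.
            self.segments_ms[name] = round((time.perf_counter() - start) * 1000, 1)

    def to_jsonl(self) -> str:
        return json.dumps({"request_id": self.request_id,
                           "segments_ms": self.segments_ms,
                           "errors": self.errors}, sort_keys=True)


if __name__ == "__main__":
    trace = Trace()
    with trace.segment("search"):
        time.sleep(0.01)   # stand-in for the web_search call
    with trace.segment("fetch"):
        time.sleep(0.02)   # stand-in for web_fetch
    with trace.segment("summarize"):
        time.sleep(0.01)   # stand-in for the model call
    print(trace.to_jsonl())
```

Because failed segments keep their duration, a fetch that times out after 30 s shows up as a 30 s `fetch` segment with an error, instead of vanishing from the latency breakdown.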

7. Error triage

| Symptom | Likely layer | First action |
|---|---|---|
| Missing/invalid key | web_search provider | Service env; doctor; cold restart |
| Null URLs in response | Parsing/version skew | Align versions; capture raw JSON |
| 429 / quota | Provider | Console usage; throttle; tier change |
| TLS handshake failed | fetch or MITM | curl -v; corporate root CAs; proxy |
| 403/503 on specific sites | Anti-bot | Mirror URLs; slow down; policy check |
| Truncation/mojibake | Encoding pipeline | UTF-8; max bytes; skip binary |
| Fails only in container | Docker DNS/proxy | curl inside container; see Docker guide |
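The triage table can also be encoded as a first-pass classifier so alerts arrive with a suggested layer. This is a rough sketch that assumes your wrapper knows which tool failed and has either an HTTP status or a Python exception; `triage` is a hypothetical helper, and the status-code groupings are judgment calls mirroring the table, not an exhaustive diagnosis.

```python
import socket
import ssl
from typing import Optional, Tuple


def triage(tool: str, status: Optional[int] = None,
           exc: Optional[BaseException] = None,
           in_container: bool = False) -> Tuple[str, str]:
    """Return (likely layer, first action) for one failed tool call."""
    if isinstance(exc, ssl.SSLError):
        return ("TLS: fetch path or MITM proxy",
                "curl -v as the service user; check corporate root CAs and proxy")
    if isinstance(exc, socket.gaierror):
        if in_container:
            return ("Docker DNS/proxy", "run curl inside the container; see the Docker guide")
        return ("DNS", "check the resolver and IPv4/IPv6 preference")
    if tool == "web_search":
        if status in (401, 403):
            return ("web_search provider key", "check service env; run doctor; cold restart")
        if status in (402, 429):
            return ("provider quota", "check console usage; throttle; consider a tier change")
    if tool == "web_fetch" and status in (403, 429, 503):
        # On fetch, these usually come from the target site, not the search provider.
        return ("anti-bot on target site", "slow down; try a mirror URL; check policy")
    return ("unclassified", "capture the raw request and response; compare with curl")
```

Splitting on `tool` first is the key design choice: the same 403 or 429 means “check your key or quota” from a search API but “you are being rate-limited or blocked” from a fetched site.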

Relying on browser automation on a laptop, or on throwaway scripts, for production web reading runs into three limits. First, sleep, screen locks, and GUI sessions behave unpredictably. Second, API keys and proxy settings are tied to interactive users rather than to the gateway process. Third, egress and TLS leave weak traces, so 429s and handshake failures end up being “fixed” by restarting. Plain Docker without clearly defined volumes, DNS, and environment inheritance adds network namespaces, UID mismatches, and OOM kills (Exit 137) that look like search-quality problems.

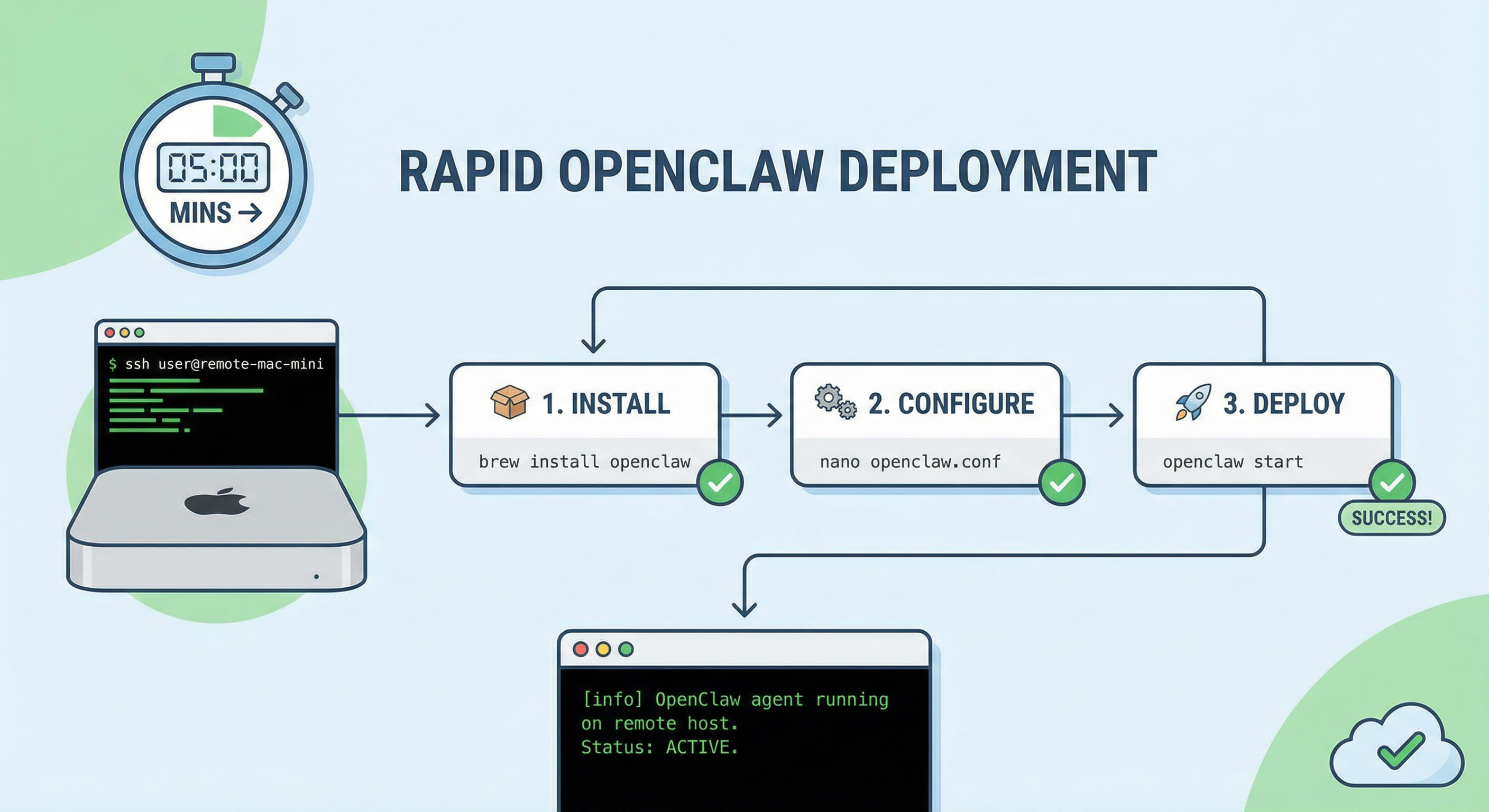

Hosting OpenClaw on a dedicated Mac cloud node, reached over SSH like a Linux VPS, puts keys, egress allowlists, and launchd/compose in one place, giving you a reliable runtime identity plus Apple tooling. For stable agents that read the web, renting a VPSMAC Mac cloud is generally the best trade-off: search and fetch run on capacity you control, under the same release checklist as channels and models. Gateway not ready yet? Follow the five-minute deployment guide first, then come back here to harden it.